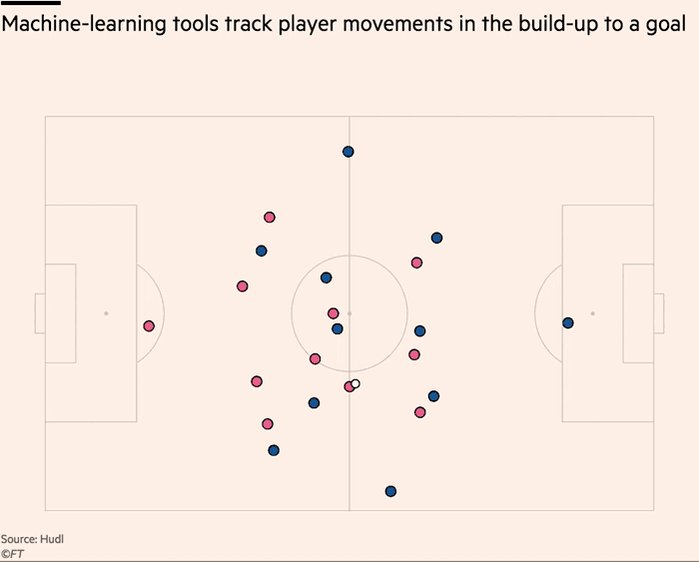

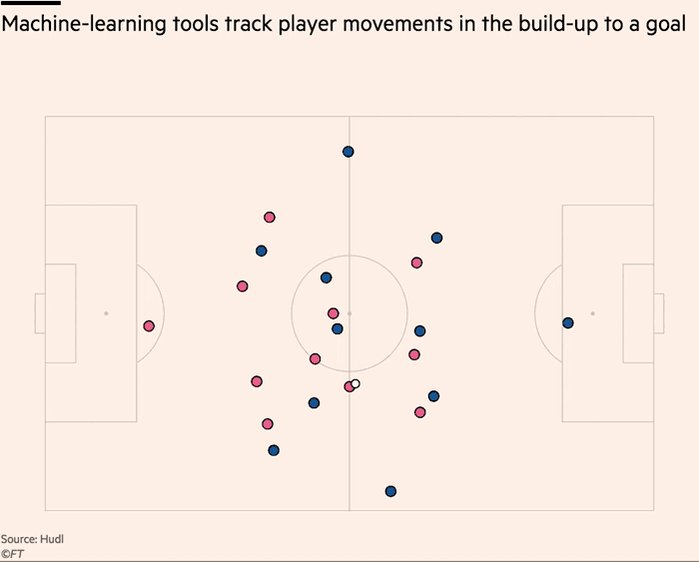

Really enjoyed digging into recent innovations in the football analytics industry.

10+ hours of interviews for this w/ a dozen or so of the top firms in the game. Really grateful to everyone who gave up their time & insights, even those that didn't make the final cut 🙇‍♂️ https://t.co/9YOSrl8TdN

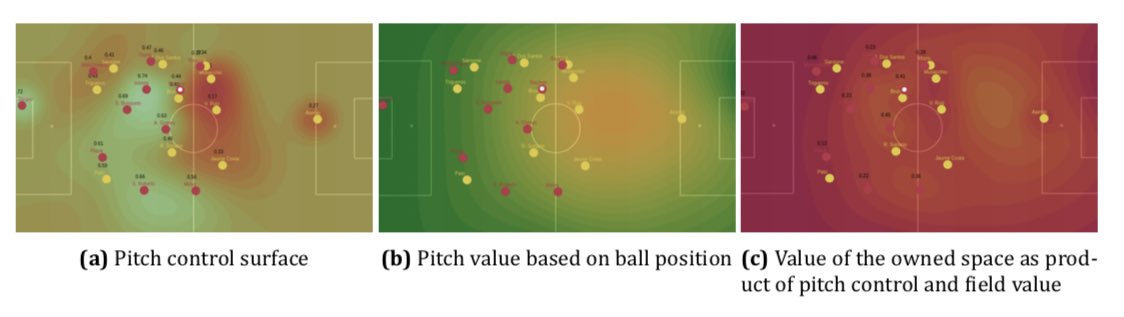

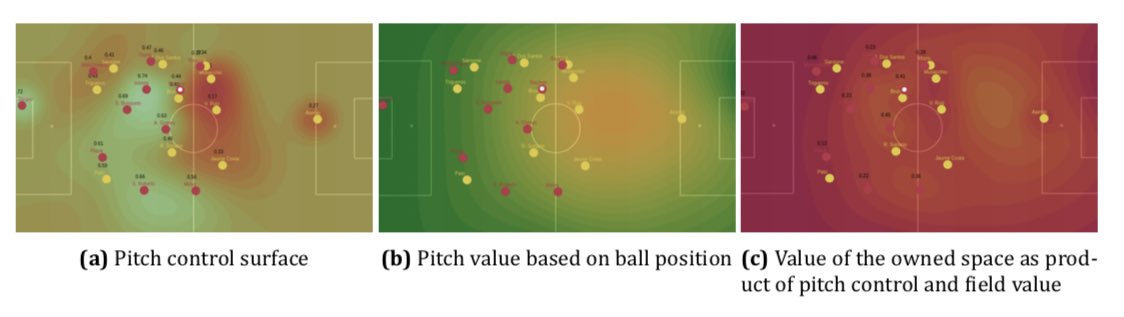

For the avoidance of doubt, leading tracking analytics firms are now well beyond Voronoi diagrams, using more granular measures to assess the control and value of space.

This 2018 paper by @JaviOnData & @LukeBornn, referenced in the piece, demonstrates one method: https://t.co/Hx8XTUMpJ5
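For readers who haven't met the baseline that, according to the thread, leading firms have moved past: a Voronoi diagram simply assigns each patch of pitch to whichever player is nearest. The sketch below is a minimal, hypothetical implementation on a 1 m grid. It assumes a 105 × 68 m pitch and plain (x, y) positions in metres, and it ignores velocity, ball position, and everything else that more granular models, such as the one in the linked paper, take into account. The function name and parameters are illustrative, not taken from any vendor's API.

```python
import math

def voronoi_control(home, away, length=105.0, width=68.0, step=1.0):
    """Share of the pitch owned by the home team under a nearest-player rule.

    home, away: lists of (x, y) player positions in metres (assumed input format).
    The pitch is split into step x step cells; each cell goes to the team whose
    closest player is nearer to the cell centre. Exact ties go to the away team.
    """
    nx = int(length / step)
    ny = int(width / step)
    home_cells = 0
    for i in range(nx):
        for j in range(ny):
            centre = ((i + 0.5) * step, (j + 0.5) * step)
            d_home = min(math.dist(centre, p) for p in home)
            d_away = min(math.dist(centre, p) for p in away)
            if d_home < d_away:
                home_cells += 1
    return home_cells / (nx * ny)

# One player per side, 45 m apart along the length of the pitch.
share = voronoi_control(home=[(30.0, 34.0)], away=[(75.0, 34.0)])
print(round(share, 3))  # 0.495
```

Every cell counts the same here, which is the core limitation: a metre of space in front of goal is worth the same as a metre by your own corner flag. The "value of space" measures mentioned in the thread address exactly this.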

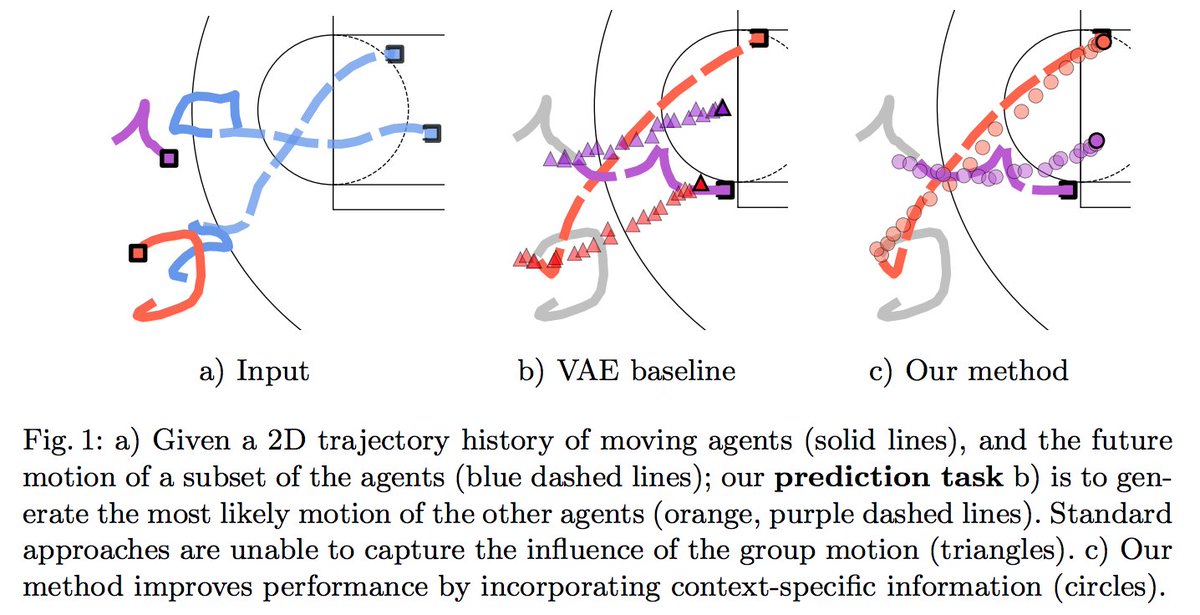

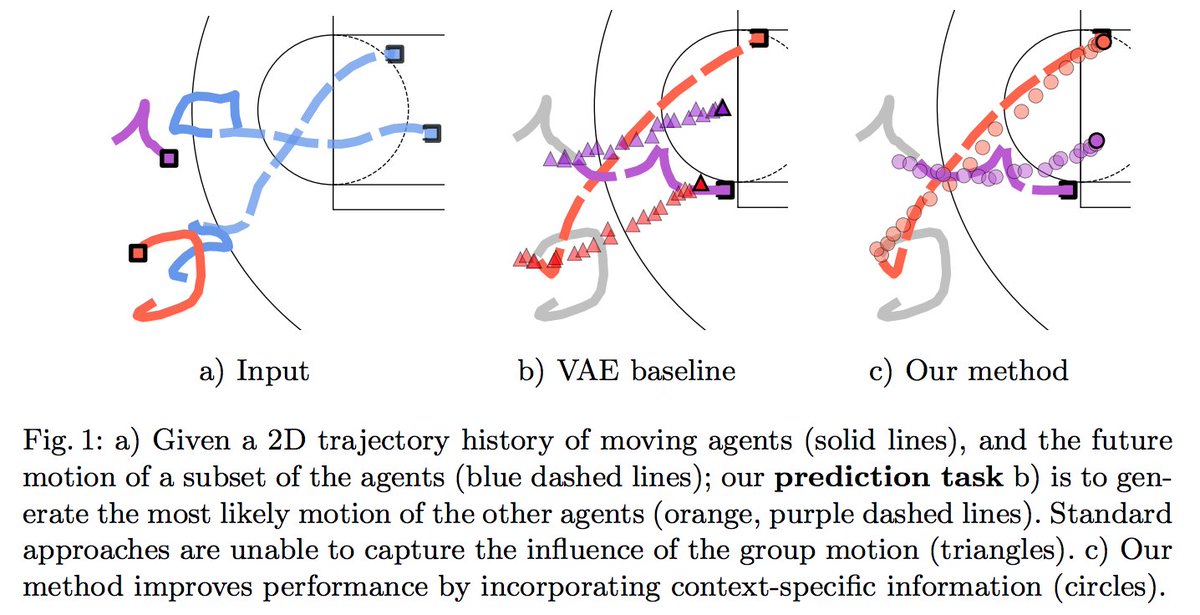

The bit of this that I nerded out on the most is "ghosting", a technique used by @counterattack9 & co at @stats_insights, among others.

Deep learning models predict how specific players, operating within specific setups, will move & execute actions. A paper here: https://t.co/9qrKvJ70EN

So many use cases:

1/ Quickly & automatically spot situations where the opponent's defence is abnormally vulnerable. Drill those to death in training.

2/ Swap target player B in for current player A, and simulate. How does the target player strengthen or weaken the team? In specific situations?
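Use case 1 can be sketched in a few lines once a ghosting model exists. Assume, hypothetically, that a trained model has already produced a "ghost" position for each defender in each frame, meaning the position a reference defender would typically take in that situation. The sketch flags frames where the real defenders stray furthest from their ghosts on average. Treating large deviation as a proxy for vulnerability, and the `threshold` value, are assumptions made for illustration. This is not how any of the firms mentioned are known to score it.

```python
import math

def flag_vulnerable_frames(actual, ghost, threshold):
    """Return indices of frames whose mean defender-to-ghost distance exceeds threshold.

    actual, ghost: lists of frames; each frame is a list of (x, y) defender
    positions, with defenders in the same order in both lists (assumed format).
    """
    flagged = []
    for t, (real_frame, ghost_frame) in enumerate(zip(actual, ghost)):
        # Distance between each real defender and their ghost in this frame.
        deviations = [math.dist(r, g) for r, g in zip(real_frame, ghost_frame)]
        mean_dev = sum(deviations) / len(deviations)
        if mean_dev > threshold:
            flagged.append(t)
    return flagged

# Two defenders over two frames; in frame 1 the first defender is 5 m off their ghost.
actual = [[(0.0, 0.0), (10.0, 0.0)], [(0.0, 0.0), (10.0, 0.0)]]
ghost = [[(0.0, 0.0), (10.0, 0.0)], [(3.0, 4.0), (10.0, 0.0)]]
print(flag_vulnerable_frames(actual, ghost, threshold=1.0))  # [1]
```

Use case 2 would reuse the same pipeline: the ghost positions would come from the model conditioned on player B instead of player A, and the two sets of outputs would then be compared.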

This is a Twitter series on #FoundationsOfML.

❓ Today, I want to start discussing the different flavors of Machine Learning we can find.

This is a very high-level overview. In later threads, we'll dive deeper into each paradigm... 👇🧵

Last time we talked about how Machine Learning works.

Basically, it's about having some source of experience E for a given task T that allows us to find a program P which is (hopefully) optimal with respect to some metric.
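Here is a toy rendering of that framing, under explicit assumptions. Experience E is a list of input/output examples, the task T is doubling a number, the candidate programs are a hand-picked family `x -> k * x`, and the metric is mean absolute error. "Learning" here is brute-force search for the candidate that scores best on E. Real methods search far larger spaces much more cleverly, but the roles of E, T, P, and the metric are the same.

```python
def mean_absolute_error(program, experience):
    """Metric: average absolute error of `program` over the examples in E (lower is better)."""
    return sum(abs(program(x) - y) for x, y in experience) / len(experience)

def learn(experience, candidates, metric):
    """Return the candidate program P that scores best (lowest) on experience E."""
    return min(candidates, key=lambda p: metric(p, experience))

# Task T (illustrative): double a number. Experience E: a few solved examples.
E = [(1, 2), (2, 4), (3, 6)]
# Hypothesis space (assumption): programs x -> k * x for a handful of k values.
candidates = [lambda x, k=k: k * x for k in (0, 1, 2, 3)]

P = learn(E, candidates, mean_absolute_error)
print(P(10))  # 20
```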

I'm starting a Twitter series on #FoundationsOfML. Today, I want to answer this simple question.

❓ What is Machine Learning?

This is my preferred way of explaining it... 👇🧵

— Alejandro Piad Morffis (@AlejandroPiad) January 12, 2021

According to the nature of that experience, we can define different formulations, or flavors, of the learning process.

A useful distinction is whether we have an explicit goal or desired output, which gives rise to the definitions of 1️⃣ Supervised and 2️⃣ Unsupervised Learning 👇

1️⃣ Supervised Learning

In this formulation, the experience E is a collection of input/output pairs, and the task T is defined as a function that produces the right output for any given input.

👉 The underlying assumption is that there is some correlation (or, in general, a computable relation) between the structure of an input and its corresponding output, and that it is possible to infer that function or mapping from a sufficiently large number of examples.
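As a minimal example of inferring a mapping from input/output pairs, the sketch below fits a straight line `y = a*x + b` by ordinary least squares. It assumes the relation is linear, which is a much stronger version of the "computable relation" assumption above. The function name `fit_line` is illustrative.

```python
def fit_line(pairs):
    """Least-squares fit of y = a*x + b to a list of (x, y) pairs.

    Returns the learned mapping as a function. Requires at least two distinct x values,
    otherwise sxx is zero and the slope is undefined.
    """
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    a = sxy / sxx                # slope
    b = mean_y - a * mean_x      # intercept
    return lambda x: a * x + b

# Input/output pairs generated by y = 3x + 1; the model never sees x = 10.
f = fit_line([(0, 1), (1, 4), (2, 7), (3, 10)])
print(f(10))  # 31.0
```

With noisy real-world pairs, the fitted line only approximates the underlying relation, which is why "sufficiently large number of examples" matters.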

Happy 2⃣0⃣2⃣1⃣ to all.🎇

For any Learning machines out there, here is a list of my favorite online investing resources. Feel free to add yours.

Let's dive in.

⬇️⬇️⬇️

Investing Services

✔️ @themotleyfool - @TMFStockAdvisor & @TMFRuleBreakers services

✔️ @7investing

✔️ @investing_city

https://t.co/9aUK1Tclw4

✔️ @MorningstarInc Premium

✔️ @SeekingAlpha Marketplaces (check your area of interest, free trials, quality, track record...)

General Finance/Investing

✔️ @morganhousel

https://t.co/f1joTRaG55

✔️ @dollarsanddata

https://t.co/Mj1owkzRc8

✔️ @awealthofcs

https://t.co/y81KHfh8cn

✔️ @iancassel

https://t.co/KEMTBHa8Qk

✔️ @InvestorAmnesia

https://t.co/zFL3H2dk6s

✔️

Tech focused

✔️ @stratechery

https://t.co/VsNwRStY9C

✔️ @bgurley

https://t.co/NKXGtaB6HQ

✔️ @CBinsights

https://t.co/H77hNp2X5R

✔️ @benedictevans

https://t.co/nyOlasCY1o

✔️

Tech Deep dives

✔️ @StackInvesting

https://t.co/WQ1yBYzT2m

✔️ @hhhypergrowth

https://t.co/kcLKITRLz1

✔️ @Beth_Kindig

https://t.co/CjhLRdP7Rh

✔️ @SeifelCapital

https://t.co/CXXG5PY0xX

✔️ @borrowed_ideas

Do you want to learn the maths for machine learning but don't know where to start?

This thread is for you.

🧵👇

The guide below is based on resources I came across and on my own experience over the past 2 years or so.

I use these resources myself, and they will (hopefully) help you understand the theoretical side of machine learning well.

Before diving into maths, I suggest first having solid programming skills in Python.

Read this thread for more

Are you planning to learn Python for machine learning this year?

Here's everything you need to get started.

🧵👇

— Pratham Prasoon (@PrasoonPratham) February 13, 2021

These are the math topics you'll need to focus on for machine learning 👇

- Trigonometry & Algebra

These are the main prerequisites for the other topics on this list.

(There are other prerequisites, but these are the most common.)

- Linear Algebra

To manipulate and represent data.

- Calculus

To train and optimize your machine learning model. This one is very important.
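To show where the last two topics come in, here is a small sketch in plain Python with no libraries, so every step is visible. The data live in a matrix `X`, predictions are a matrix-vector product (linear algebra), and training follows the gradient of the mean squared error (calculus). The toy dataset, learning rate, and step count are illustrative assumptions. In practice you would use a library such as NumPy.

```python
def predict(X, w):
    """Linear algebra: predictions are the matrix-vector product X @ w."""
    return [sum(xij * wj for xij, wj in zip(row, w)) for row in X]

def mse_gradient(X, y, w):
    """Calculus: for L(w) = (1/n) * sum((Xw - y)^2), the gradient is (2/n) * X^T (Xw - y)."""
    n = len(X)
    residuals = [p - t for p, t in zip(predict(X, w), y)]
    return [2.0 / n * sum(X[i][j] * residuals[i] for i in range(n))
            for j in range(len(w))]

def gradient_descent(X, y, lr=0.05, steps=2000):
    """Repeatedly step the weights against the gradient to reduce the error."""
    w = [0.0] * len(X[0])
    for _ in range(steps):
        grad = mse_gradient(X, y, w)
        w = [wj - lr * gj for wj, gj in zip(w, grad)]
    return w

# Each row is one example: [bias term 1.0, feature x]; targets follow y = 1 + 3x.
X = [[1.0, 0.0], [1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
y = [1.0, 4.0, 7.0, 10.0]
w = gradient_descent(X, y)
print([round(v, 3) for v in w])  # [1.0, 3.0]
```

Trigonometry and algebra don't appear explicitly, but every line above leans on them: rearranging the loss, expanding the sum, and reading the gradient formula are all algebra.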