Study this thread and then check the VIX charts and Nifty charts from 1st Oct 2018. There is a reason I am retweeting this now :)

1. IV > HV

2. High IVR

3. Sell strangles 2SD away

This is the crux of all complicated option selling strategies. Nothing so simple can work across all market conditions.

— Subhadip Nandy (@SubhadipNandy16) October 5, 2018
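For readers who want to see the three rules as arithmetic, here is a minimal sketch. It is not the author's method. It assumes the common definition of IV Rank (where current IV sits in its 52-week range) and a lognormal-style expected move of spot × IV × sqrt(days/365). The function names, the 50-point `strike_step`, and the `ivr_threshold` of 50 are illustrative assumptions, not values from the thread.

```python
import math

def iv_rank(current_iv, iv_low_52w, iv_high_52w):
    """IV Rank on a 0-100 scale: position of current IV in its 52-week range."""
    if iv_high_52w <= iv_low_52w:
        raise ValueError("52-week IV high must exceed the low")
    return 100.0 * (current_iv - iv_low_52w) / (iv_high_52w - iv_low_52w)

def passes_screen(iv, hv, ivr, ivr_threshold=50.0):
    """Rules 1 and 2: IV above HV, and IV Rank at or above an assumed threshold."""
    return iv > hv and ivr >= ivr_threshold

def strangle_strikes(spot, iv, days_to_expiry, n_sd=2.0, strike_step=50):
    """Rule 3: strikes about n_sd standard deviations from spot.

    iv is annualised and expressed as a decimal (0.16 for 16%).
    Strikes are rounded outward to the nearest strike_step (assumed spacing).
    """
    one_sd = spot * iv * math.sqrt(days_to_expiry / 365.0)
    put_strike = math.floor((spot - n_sd * one_sd) / strike_step) * strike_step
    call_strike = math.ceil((spot + n_sd * one_sd) / strike_step) * strike_step
    return put_strike, call_strike

if __name__ == "__main__":
    ivr = iv_rank(current_iv=20.0, iv_low_52w=10.0, iv_high_52w=30.0)
    if passes_screen(iv=0.20, hv=0.15, ivr=ivr):
        print(strangle_strikes(spot=10000, iv=0.20, days_to_expiry=30))
```

As the tweet itself warns, a screen this simple says nothing about regime changes such as a volatility spike, so treat it as a way to read the rules, not as a strategy.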

More from Subhadip Nandy

My presentation on Money Management was based on a lot of sources, as I mentioned. For traders interested in those sources, here they are:

#OptimalF

Portfolio Management Formulas: Mathematical Trading Methods for the Futures, Options, and Stock Markets by Ralph Vince

The Mathematics of Money Management: Risk Analysis Techniques for Traders by Ralph Vince

#SecureF

#FixedRatio

The Trading Game: Playing by the Numbers to Make Millions by Ryan Jones

https://t.co/U0c65EbEog.

More from All

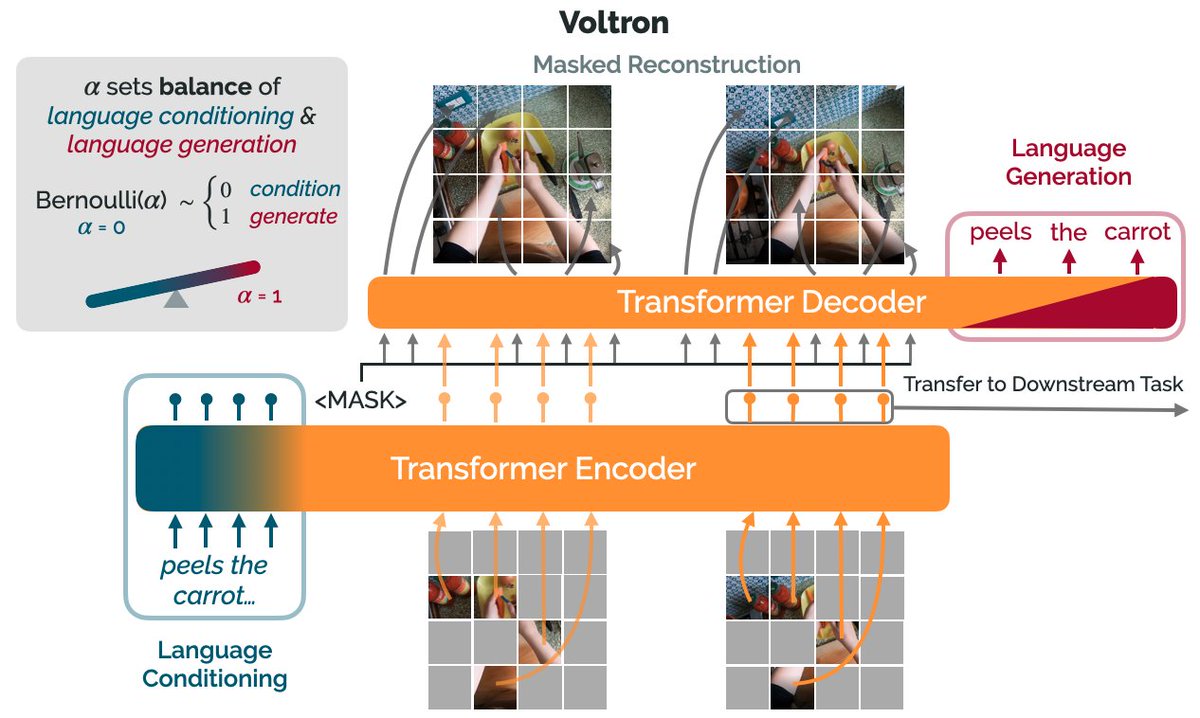

How can we use language supervision to learn better visual representations for robotics?

Introducing Voltron: Language-Driven Representation Learning for Robotics!

Paper: https://t.co/gIsRPtSjKz

Models: https://t.co/NOB3cpATYG

Evaluation: https://t.co/aOzQu95J8z

🧵👇 (1/12)

Videos of humans performing everyday tasks (Something-Something-v2, Ego4D) offer a rich and diverse resource for learning representations for robotic manipulation.

Yet an underused part of these datasets is the rich natural language annotations accompanying each video. (2/12)

The Voltron framework offers a simple way to use language supervision to shape representation learning, building off of prior work in representations for robotics like MVP (https://t.co/Pb0mk9hb4i) and R3M (https://t.co/o2Fkc3fP0e).

The secret is *balance* (3/12)

Starting with a masked autoencoder over frames from these video clips, make a choice:

1) Condition on language and improve our ability to reconstruct the scene.

2) Generate language given the visual representation and improve our ability to describe what's happening. (4/12)

By trading off *conditioning* and *generation* we show that we can learn 1) better representations than prior methods, and 2) explicitly shape the balance of low and high-level features captured.

Why is the ability to shape this balance important? (5/12)
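The thread does not say how the trade-off between conditioning and generation is implemented. One possible reading, sketched below purely as an assumption, is a per-batch choice between the two objectives controlled by a mixing probability. The names `alpha`, `pick_objective`, and `objective_counts` are hypothetical and are not taken from the Voltron paper or code.

```python
import random

def pick_objective(alpha, rng):
    """Return 'condition' with probability alpha, otherwise 'generate'.

    'condition': reconstruct masked frames given the language annotation.
    'generate':  produce the language annotation from the visual representation.
    """
    return "condition" if rng.random() < alpha else "generate"

def objective_counts(alpha, num_batches, seed=0):
    """Count how often each objective is chosen over num_batches batches."""
    rng = random.Random(seed)
    counts = {"condition": 0, "generate": 0}
    for _ in range(num_batches):
        counts[pick_objective(alpha, rng)] += 1
    return counts

if __name__ == "__main__":
    # alpha near 1 favours conditioning, alpha near 0 favours generation.
    for alpha in (0.0, 0.5, 1.0):
        print(alpha, objective_counts(alpha, num_batches=1000))
```

Under this reading, a single number shifts training between the reconstruction objective and the description objective, which is one way the "balance" the thread mentions could be exposed as a knob.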

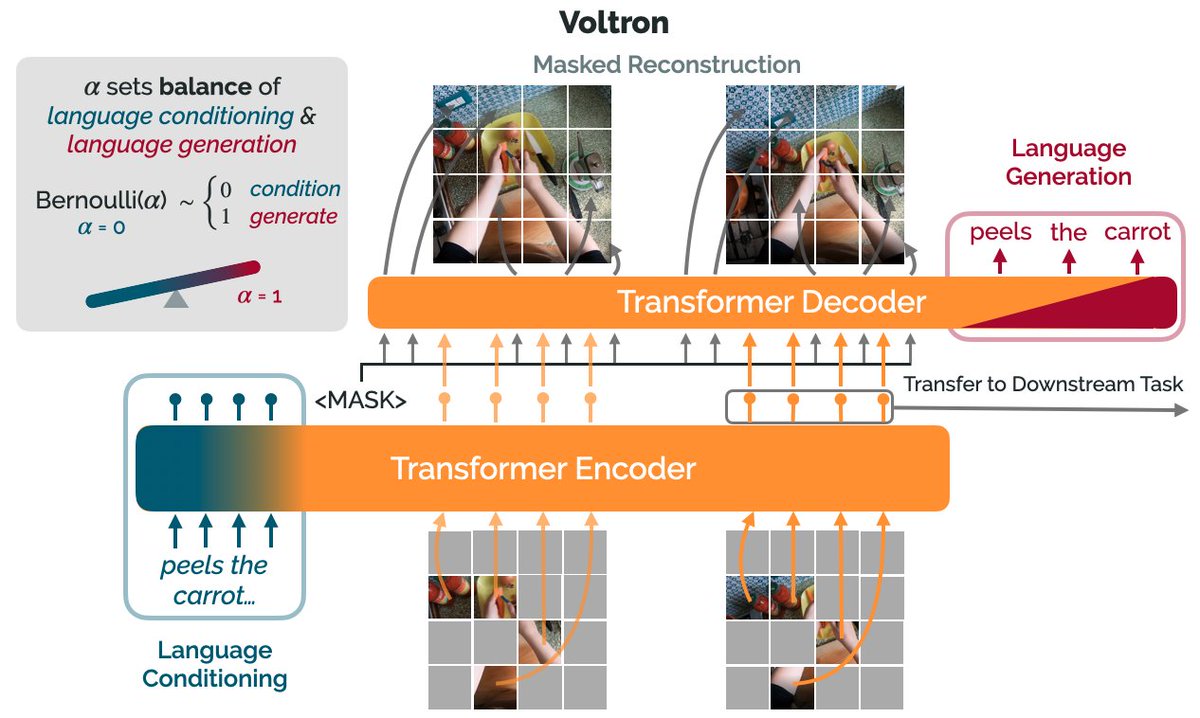
