Last up in Privacy Tech for #enigma2021, @xchatty speaking about "IMPLEMENTING DIFFERENTIAL PRIVACY FOR THE 2020

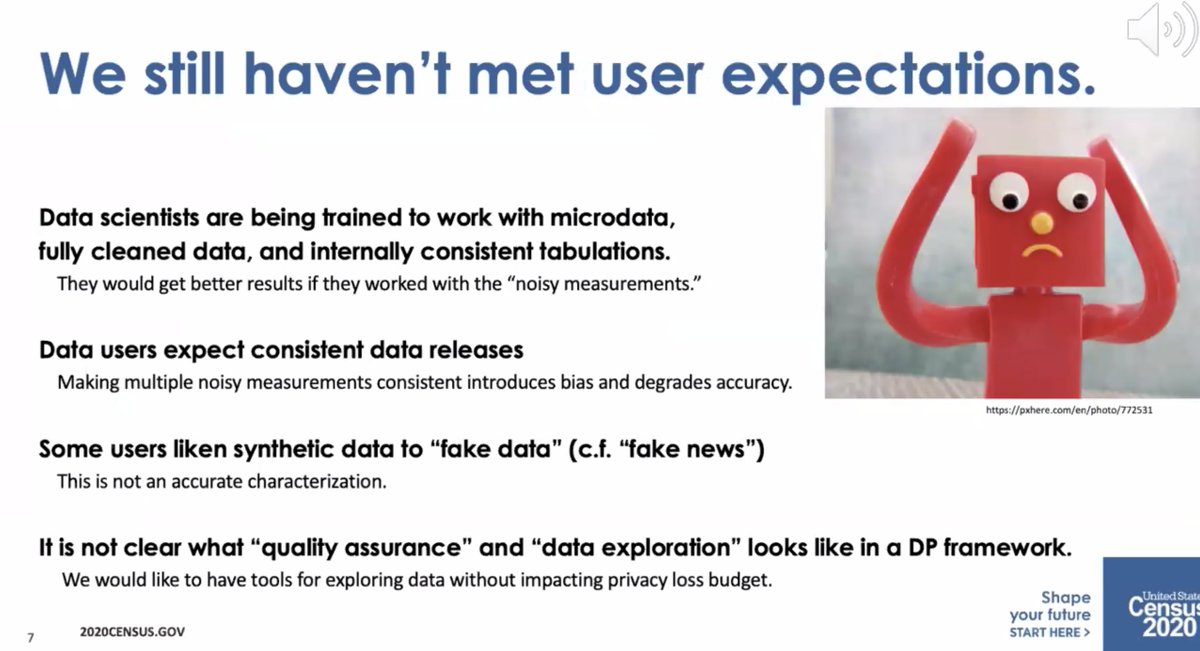

* Data users expect consistent data releases

* Some people call synthetic data "fake data", like "fake news"

* It's not clear what "quality assurance" and "data exploration" mean in a DP framework

* required to collect it by the constitution

* but required to maintain privacy by law

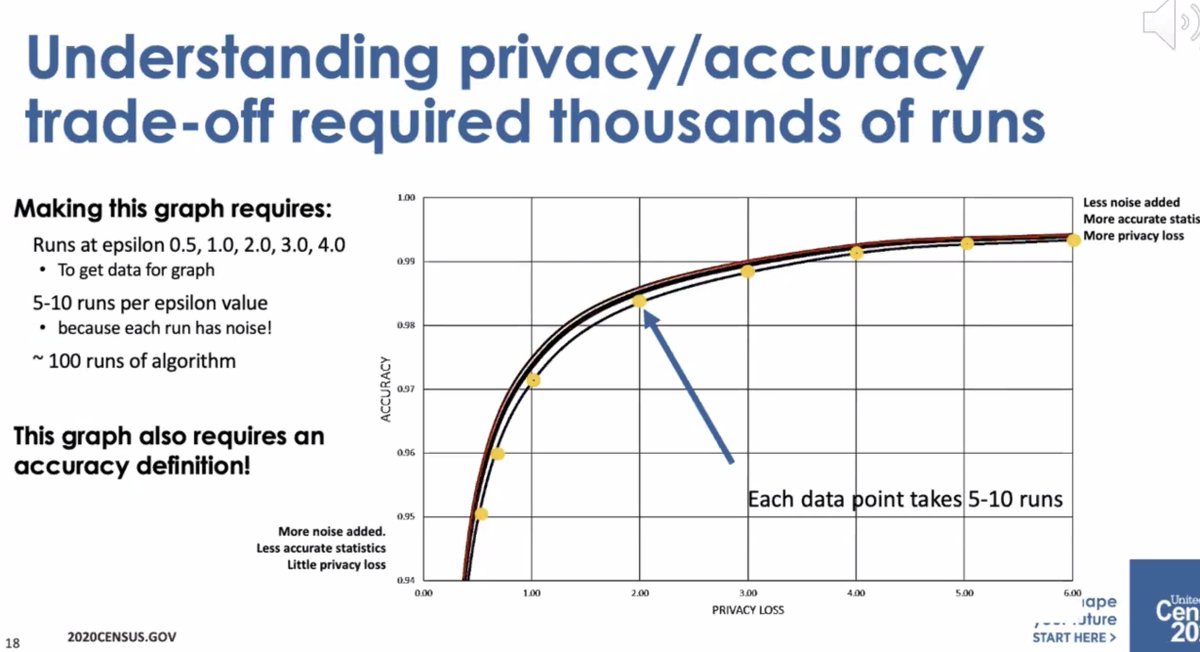

* differential privacy is open and we can talk about privacy loss/accuracy tradeoff

* swapping assumed limitations of the attackers (e.g. limited computational power)
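To make the privacy loss/accuracy tradeoff concrete, here is a minimal sketch using the textbook Laplace mechanism on a single count. It is not the Census Bureau's actual algorithm. The function names, the true count of 100, and the epsilon values are assumptions chosen purely for illustration.

```python
import random
import statistics


def laplace_noise(scale, rng):
    # The difference of two independent exponentials with mean `scale`
    # is Laplace-distributed with location 0 and that scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_count(true_count, epsilon, rng, sensitivity=1.0):
    # Textbook Laplace mechanism: noise scale = sensitivity / epsilon.
    # A counting query changes by at most 1 when one person is added
    # or removed, hence sensitivity = 1 (an assumption of this sketch).
    return true_count + laplace_noise(sensitivity / epsilon, rng)


def mean_abs_error(epsilon, trials=20000, seed=0):
    # Estimate the average absolute error of the noisy count by simulation.
    rng = random.Random(seed)
    true_count = 100  # hypothetical value, illustration only
    return statistics.fmean(
        abs(noisy_count(true_count, epsilon, rng) - true_count)
        for _ in range(trials)
    )


if __name__ == "__main__":
    for eps in (0.1, 1.0, 10.0):
        print(f"epsilon={eps}: mean absolute error ~ {mean_abs_error(eps):.2f}")
```

A smaller epsilon means less privacy loss but more noise: in this sketch the expected absolute error is 1/epsilon, so the tradeoff can be stated and debated openly, which is the point the bullet above makes.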

Change in the meaning of "privacy" as relative -- it requires a lot of explanation and overcoming organizational barriers.

* different groups at the Census thought that meant different things

* before, states were processed as they came in. Differential privacy requires everything to be computed at once

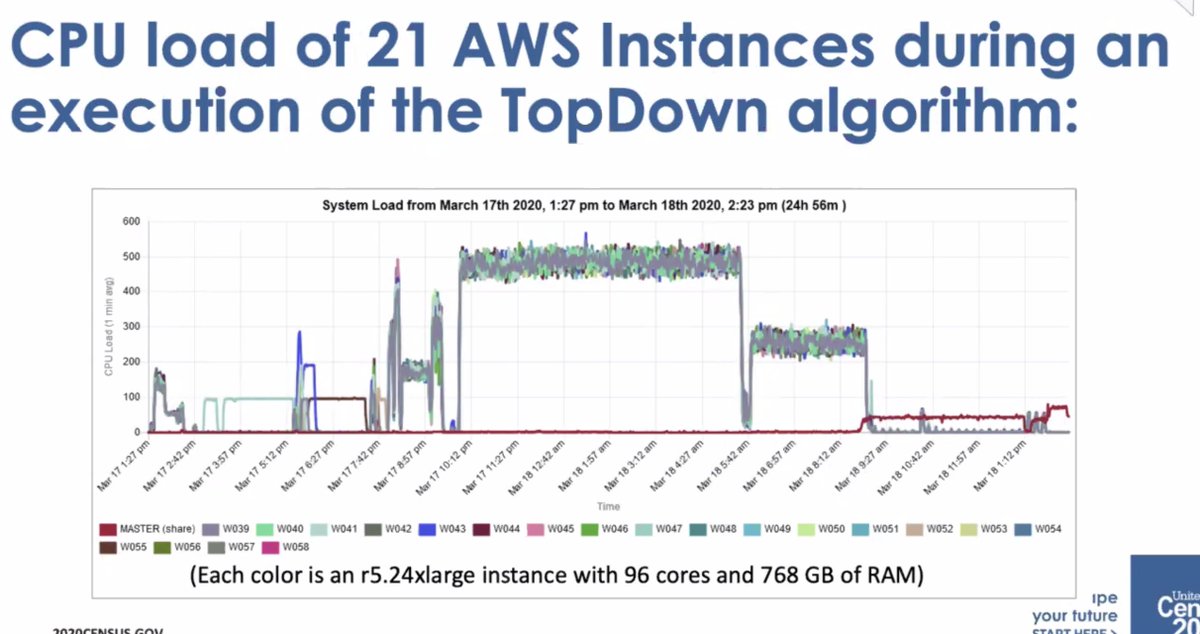

* required a lot more computing power

* initial implementation was by Dan Kifer, who took a sabbatical

* expanded the team with Simson and others

* 2018 end-to-end test

* then got to move to AWS Elastic compute... but the monitoring wasn't good enough and they had to create their own dashboard to track execution

* it wasn't a small amount of compute

* ... it wasn't well-received by the data users who thought there was too much error

If you avoid that, you might add bias to the data. How to avoid that? Let some data users get access to the measurement files [I don't follow]

More from Lea Kissner

More from Tech

Interestingly, this thread below has been written by that.

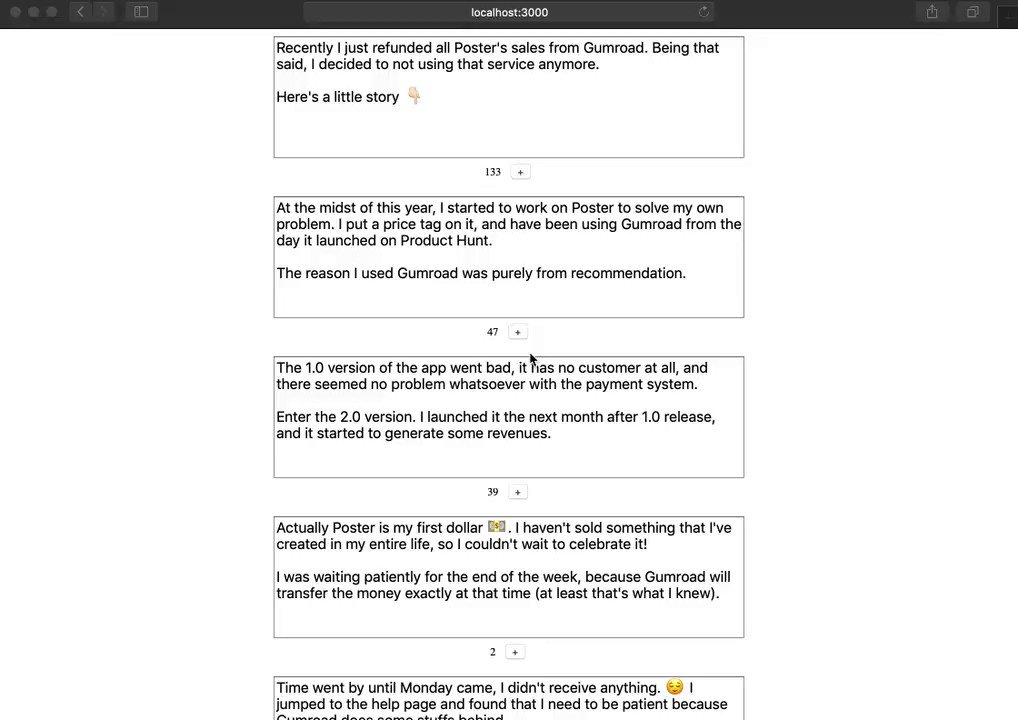

Let me show you how it looks like. 👇🏻

Recently I just refunded all Poster's sales from Gumroad. Being that said, I decided to not using that service anymore.

— Wilbert Liu \U0001f468\U0001f3fb\u200d\U0001f3a8 (@wilbertliu) November 19, 2018

Here's a little story \U0001f447\U0001f3fb

When you see localhost up there, you should know that it's truly an experiment! 😀

It's a dead-simple thread writer that will post a series of tweets a.k.a tweetstorm. ⚡️

I've been personally wanting it myself since few months ago, but neglected it intentionally to make sure it's something that I genuinely need.

So why is that important for me? 🙂

I've been a believer of a story. I tell stories all the time, whether it's in the real world or online like this. Our society has moved by that.

If you're interested by stories that move us, read Sapiens!

One of the stories that I've told was from the launch of Poster.

It's been launched multiple times this year, and Twitter has been my go-to place to tell the world about that.

Here comes my frustration.. 😤
