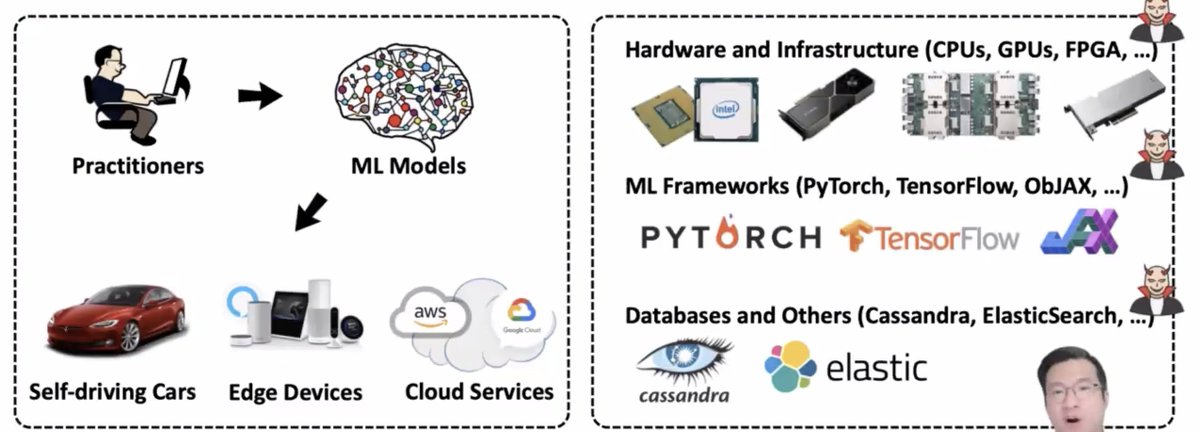

Next up at #enigma2021, Sanghyun Hong will be speaking about "A SOUND MIND IN A VULNERABLE BODY: PRACTICAL HARDWARE ATTACKS ON DEEP LEARNING"

(Hint: speaker is on the

* looks at the robustness in an isolated manner

* doesn't look at the whole ecosystem and how the model is used -- ML models are running in real hardware with real software which has real vulns!

e.g. fault injection attacks, side-channel attacks

* co-location of VMs from different users

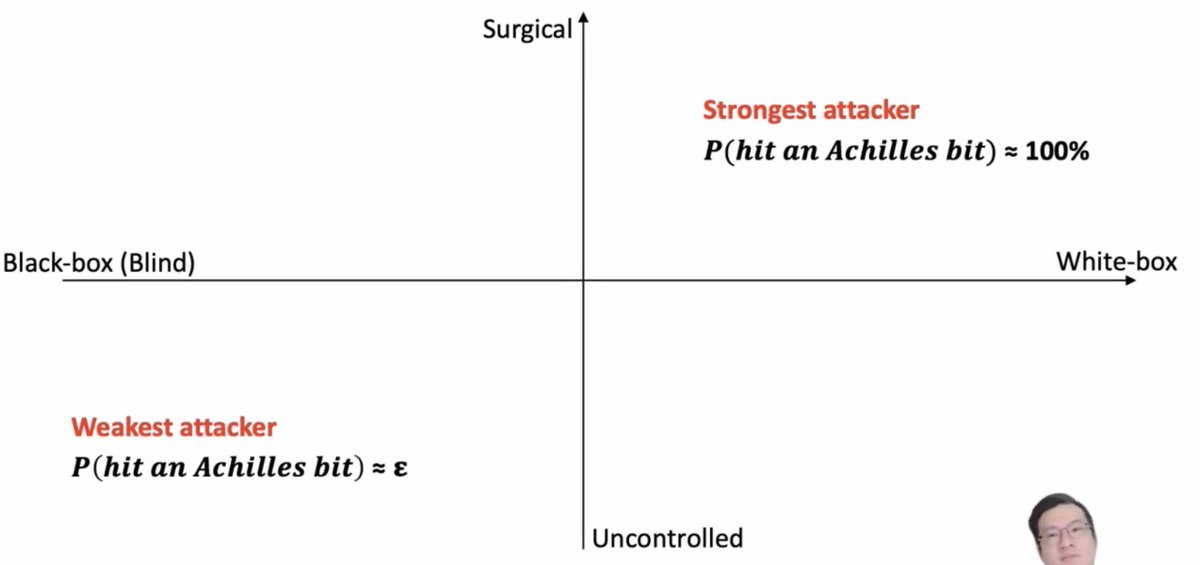

* weak attackers with less subtle control

The cloud providers try to secure things, e.g. protections against Rowhammer

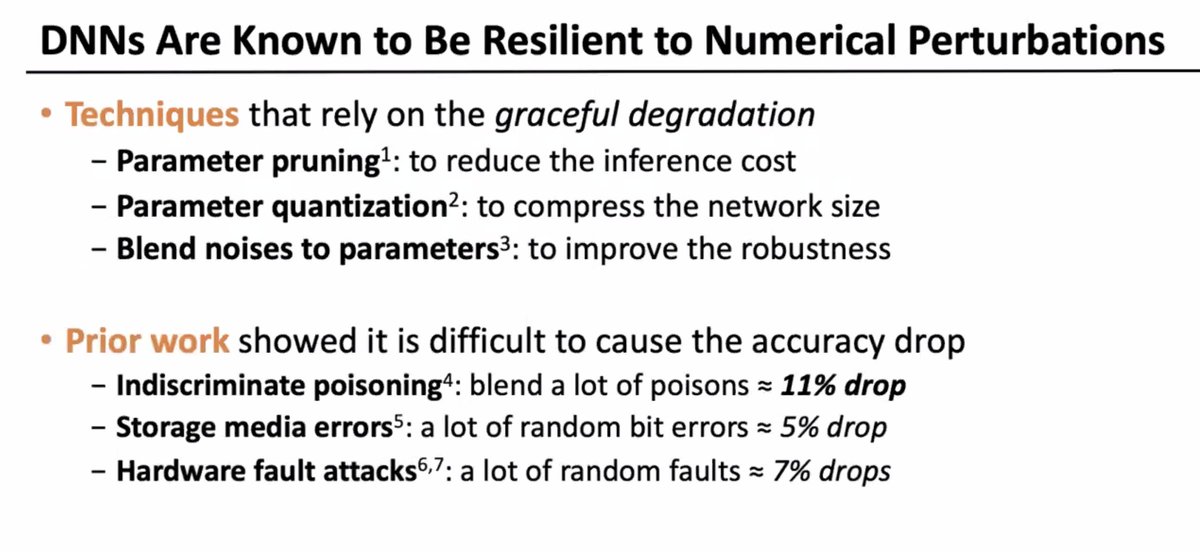

... BUT this focuses on the average or best case, not the worst case!

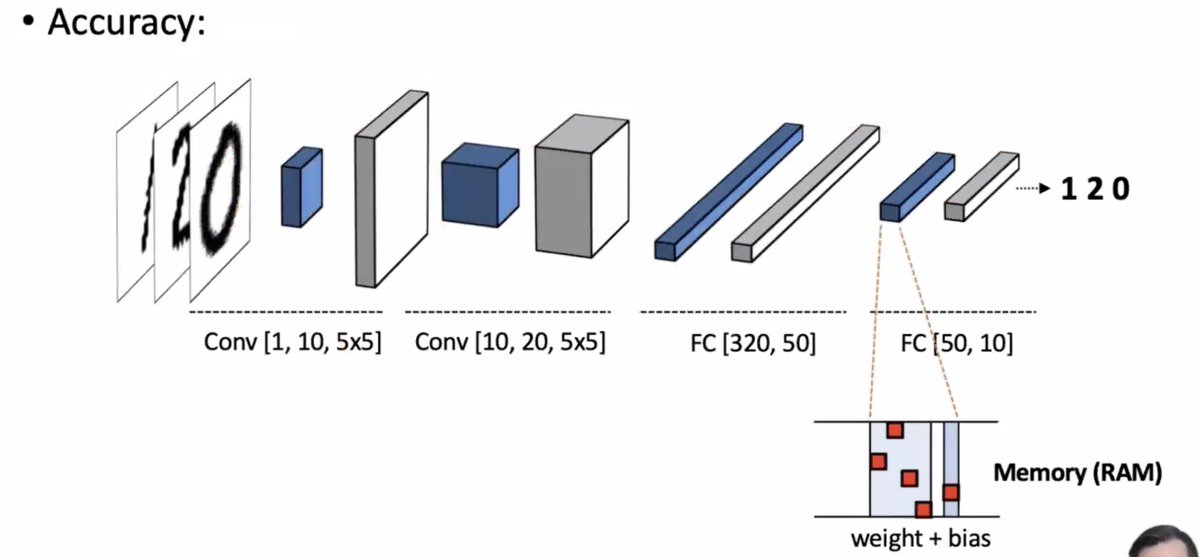

* negligible effect on the average case accuracy

* but flipping one bit can cause a significant amount of damage for particular queries

How much damage can a single bit flip cause?

Some strong attackers might be able to hit an "achilles" bit (one that's really going to mess with the model), but weaker attackers are going to hit bits more randomly.
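To get a feel for why a single bit matters, here is a minimal sketch, assuming the model's weights are stored as IEEE 754 float32 values (the talk's actual experiments are not reproduced here). The helper name `flip_bit` is made up for illustration; it flips one bit of a weight and returns the resulting value.

```python
import struct


def flip_bit(value: float, bit: int) -> float:
    """Flip one bit of `value` viewed as an IEEE 754 float32.

    bit 0 is the least significant mantissa bit, bits 23-30 are the
    exponent, and bit 31 is the sign. (Hypothetical helper, for illustration.)
    """
    # Reinterpret the float32 bytes as an unsigned 32-bit integer.
    (as_int,) = struct.unpack("<I", struct.pack("<f", value))
    # XOR with a one-hot mask flips exactly one bit.
    flipped_int = as_int ^ (1 << bit)
    # Reinterpret the modified integer as a float32 again.
    (flipped,) = struct.unpack("<f", struct.pack("<I", flipped_int))
    return flipped


weight = 0.5
print(flip_bit(weight, 0))   # lowest mantissa bit: barely changes the weight
print(flip_bit(weight, 22))  # highest mantissa bit: 0.75
print(flip_bit(weight, 31))  # sign bit: -0.5
print(flip_bit(weight, 30))  # top exponent bit: 2**127, about 1.7e38
```

Most positions nudge the weight only slightly, which fits the "negligible effect on average" observation, while flipping the top exponent bit turns a small weight into an enormous one. That is the kind of bit a strong attacker would aim for, and a random flip only lands on it occasionally.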

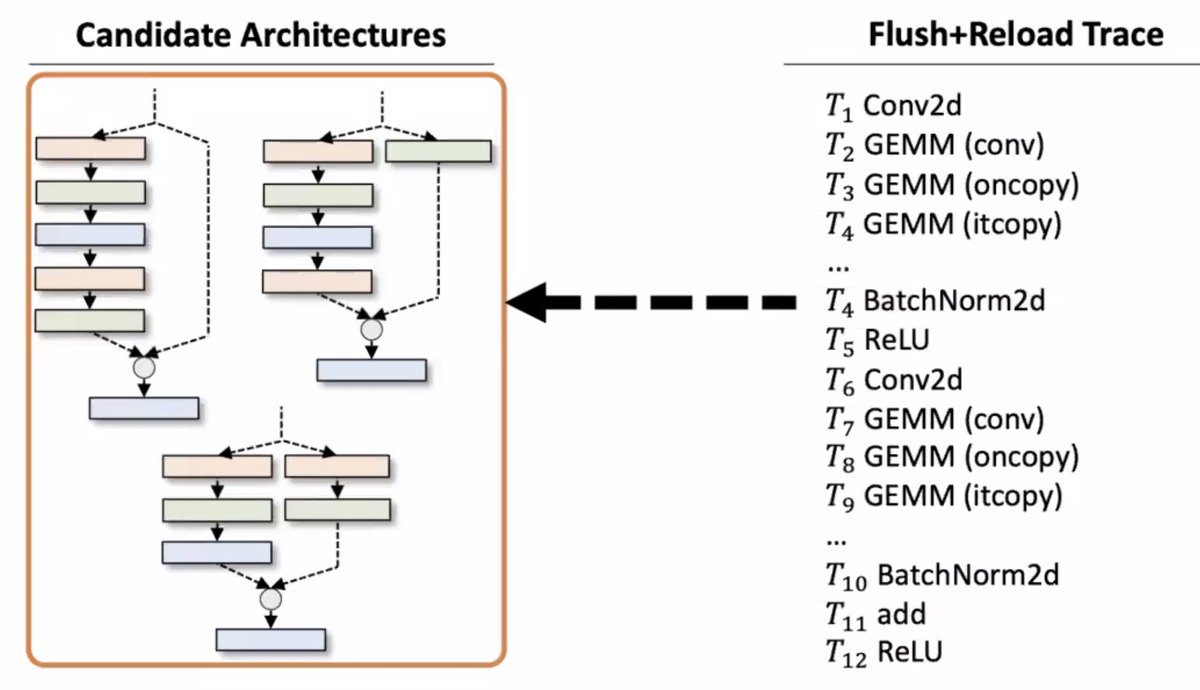

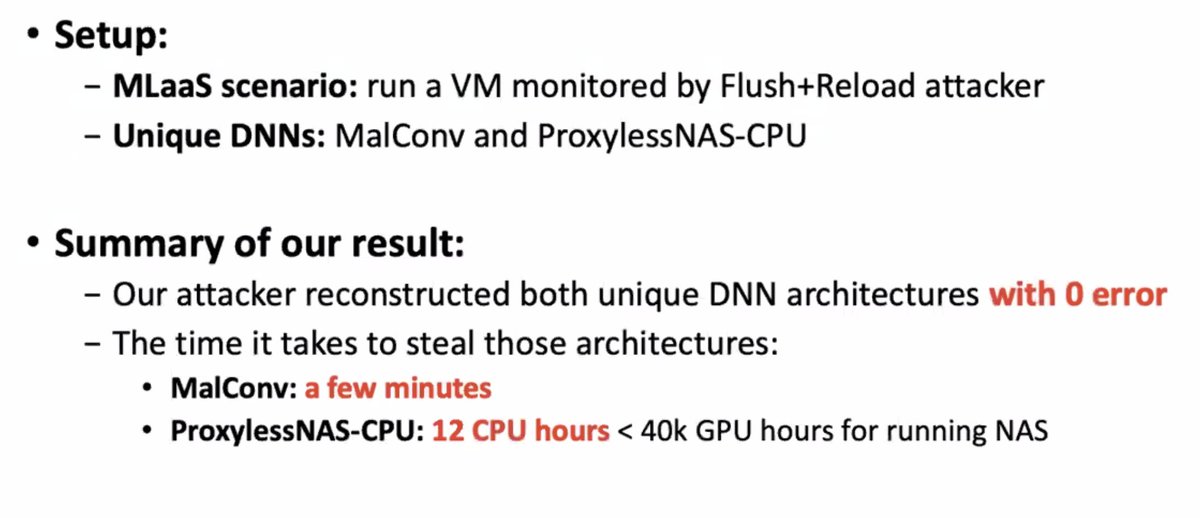

The attacker might want to get their hands on fancy DNNs which are considered trade secrets and proprietary to their creators. They're expensive to make! They need good training data! People want to protect them!

Does this work? Apparently so: they tried it out using a cache side-channel attack and got the architectures of the fancy DNNs back.

More from Lea Kissner

More from Science

https://t.co/hXlo8qgkD0

Looks like they got a classic case of PCR cross-contamination.

They had 2 fabricated samples (SRX9714436 and SRX9714921) on the same PCR run, alongside Lung07. They did not perform metagenomic sequencing on the “feces” and they did not get

a positive oral or anal swab from anywhere in their sampling. Feces come from the anus, and if these were positive the anal swabs must also be positive. Clearly it got there after the NA had been extracted, and came from the very low-level degraded RNA which was mutagenized by

the Taq. See https://t.co/yKXCgiT29w for SRX9714921 and SRX9714436.

Human+mouse in the positive SRA, human in both of them. Seeing human+mouse in identical proportions across 3 different sequencers (PRJNA573298, A22, SRX9714436) is a pretty straight indication that the originals

were already contaminated with human and mouse from the very beginning, and that this contamination is due to dishonesty in the sample handling process, which prescribes spiking of samples in ACE2-HEK293T/A549, VERO E6 and human lung xenograft mouse.

The “lineages” they claimed to have found aren’t mutational lineages at all: all the mutations they see on these sequences are unique to that specific sequence, and are the result of RNA degradation and of Taq polymerase errors accumulated during the nested PCR process.

The endangered whales must contend with up to 1,000 boats moving daily through an important feeding area in the eastern South Pacific, according to research published in the scientific journal Nature. @WeDontHaveTime

#ForNature @JohnKerry

Blue whales threatened by ship collisions in busy Patagonia waters

— James Mitchell Ⓥ🐬 (@MesMitch) February 1, 2021

Endangered giants face potentially fatal encounters with the 1,000 daily fishing vessels moving through main feeding area off Chile, scientists warn 🐻‍❄️ @WeDontHaveTime

#ForNature @JohnKerry

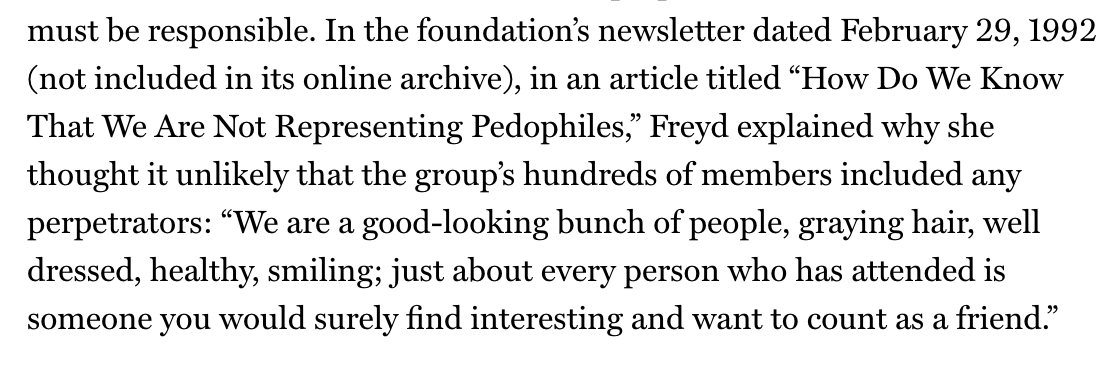

So it turns out that an organization I thought was doing good work, the False Memory Syndrome Foundation (associated with Center for Inquiry, James Randi, and Martin Gardner) was actually caping for pedophiles. Uhhhh oops?

Since this, bizarrely, turned out to be one of my longest videos ever (??) here's a quick thread to sum it up for those of you like myself with short attention spans. 1/10

In the '90s the False Memory Syndrome Foundation was founded to call attention to the problem of adults suddenly "remembering" child abuse that never actually happened, often under hypnosis. Skeptics like James Randi & Martin Gardner joined their board. 2/10

A new article reveals that the FMSF was founded by parents who had been credibly and PRIVATELY accused of molestation by their now-adult daughter. They publicized the accusation, destroyed the daughter's reputation, and started the foundation. 3/10

The FMSF assumed any accused pedo who joined was innocent, saying "We are a good-looking bunch of people, graying hair, well dressed, healthy, smiling; just about every person who has attended is someone you would surely find interesting and want to count as a friend" 😬 4/10

I was Wrong about False Memories: Satanic Panic, Pedophiles, Ted Bundy, and the Lost in the Mall Studies https://t.co/6XKTfGOqwl

— skepchicks (@skepchicks) January 15, 2021
