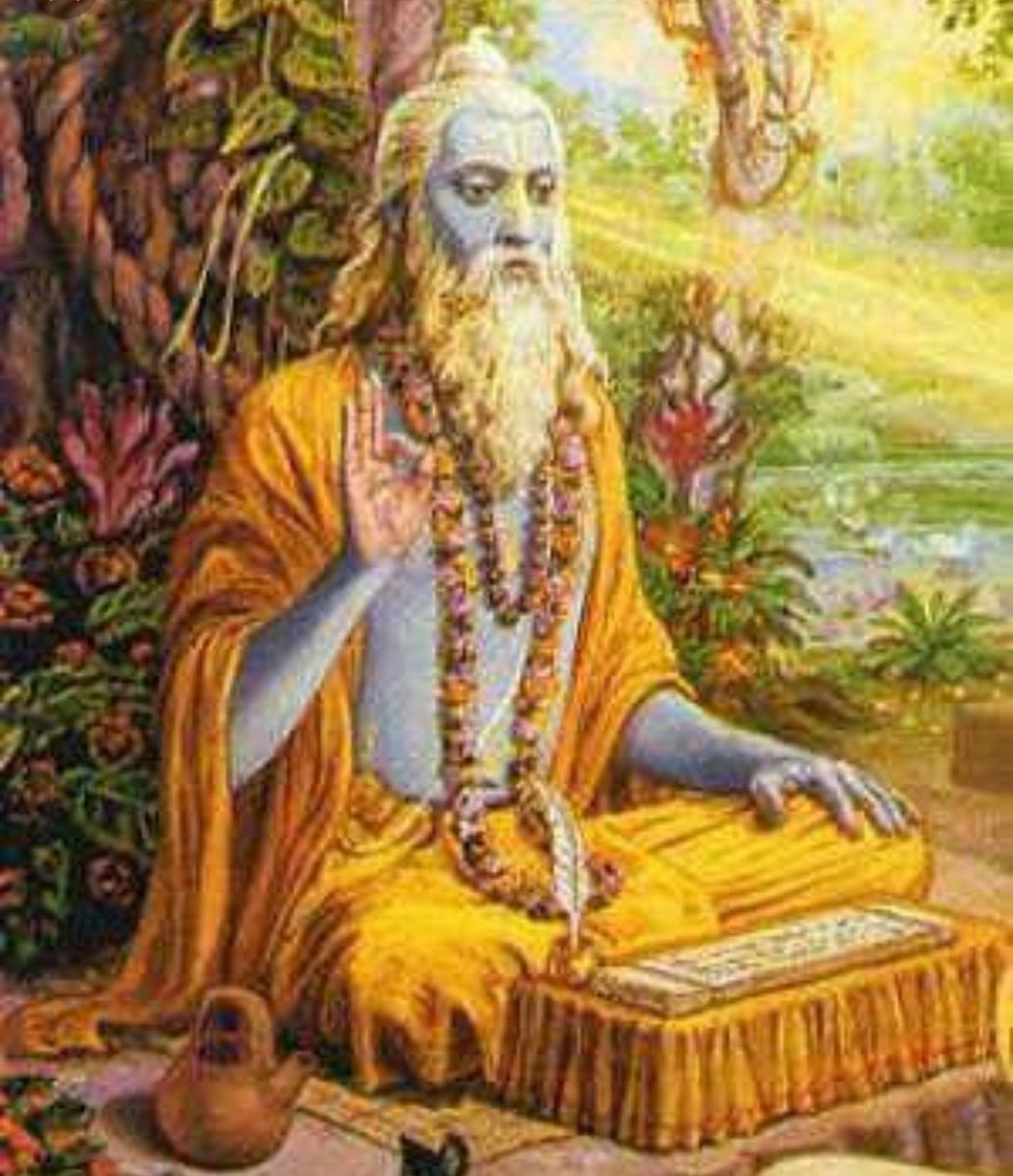

Birth of Chitragupta - Brahma Dev gave Yama Dharma the work of punishing or rewarding the souls of people based on their actions in life.

#ChitraPournami - Celebrated today, on the full-moon day with Chithirai Nakshatra in the Tamil month of Chithirai (April/May).

There are many legends related to this day. #Thread

It is the birthday of Chitragupta, Yama's assistant.

Indra Dev prayed to the Shiva Lingam in Kadamba vanam (Madurai) with a golden lotus.

Once, Indra and Indrani did severe penance to please Bhagwan Shiva and obtain the boon of a child. Pleased with their prayers, Bhagwan Shiva ordered Chitragupta to be born as a child to Indrani.

Chitragupta after

Indrani was happy to have Kamadhenu at her place.

Chitragupta was born in the palace of Indra & Indrani, and Indralok celebrated the birth of the child.

This happened on Chitra Pournami day. He is said to have worshipped with a golden lotus.

To know about why Indra got Brahmahathi https://t.co/GGfLnDL8ad read #STORY 👇

https://t.co/WQ81Vk2YVo

More from SK

#sculpture #story

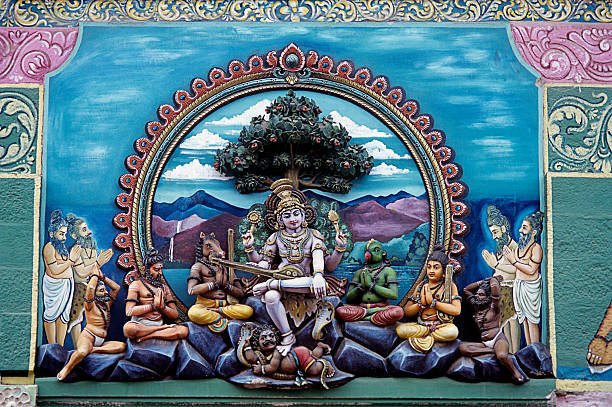

The Kirata-Arjuniyam episode in the Vana Parva of the #Mahabharata serves as proof of the persistence & focus of Arjuna and the playfulness & generosity of Bhagawan Shiva. Shiva wished to test Arjuna, so he appears as a Kirata (hunter), along with Devi as a huntress.

📸 1 - Sculpture in the gopuram of Kapaleeshwarar Temple, Chennai, TN

📸 2 - A panel in the beautiful Amruteshwara Temple, Amruthapura, Karnataka

The sculptures depict Arjuna doing tapas, the killing of the boar, and the battle between Kirata (Shiva) & Arjuna, after which Arjuna obtained the Pashupatastra.

#story #Thread - In the Vana Parva, Arjun sets out to obtain the Pashupatastra from Shiva. While doing tapas, he was distracted when he saw a wild boar (an asura) rushing towards him to slay him. Arjun took his Gandiva & pointed it at the boar. A hunter (kirata) & his wife were present at that spot.

Both #Arjun & Kirata shot an arrow at the boar & it fell dead. The boar revealed its true form, which was a rakshasa named https://t.co/Ptq7Q6FsmQ argument erupted between the hunter & Arjuna as to who shot the boar first. Arjun showered arrows at the hunter & a fierce battle started.

📸 - Sculpture depicting the battle of Kirata & Arjuna at Kailasanathar Temple, Kanchipuram, TN

Arjuna kept showering arrows at the Kirata. Awestruck that his arrows had no effect on the hunter, Arjuna stood still. He soon realised that this must be none other than Shiva himself.

Maragatha Natarajar murthi - Uthirakosamangai temple near Ramanathapuram, TN

#ArudraDarisanam

This unique Natarajar, made of emerald, is about 6 feet tall.

It is always covered with sandal paste. Only on the Thiruvadhirai star in the month of Margazhi can Nataraja be worshipped without sandal paste.

After removing the sandal paste, day-long rituals & various abhishekam will be https://t.co/e1Ye8DrNWb day Maragatha Nataraja sannadhi will be closed after anointing the murthi with fresh sandal paste. Maragatha Natarajar is covered with sandal paste throughout the year, as emerald is believed to be disturbed by exposure to light, water, and sound.

This is an ancient Shiva temple, considered to be 3000 years old and believed to be where Bhagwan Shiva gave Veda gyaana to Parvati Devi. The temple has some stunning sculptures.

More from All

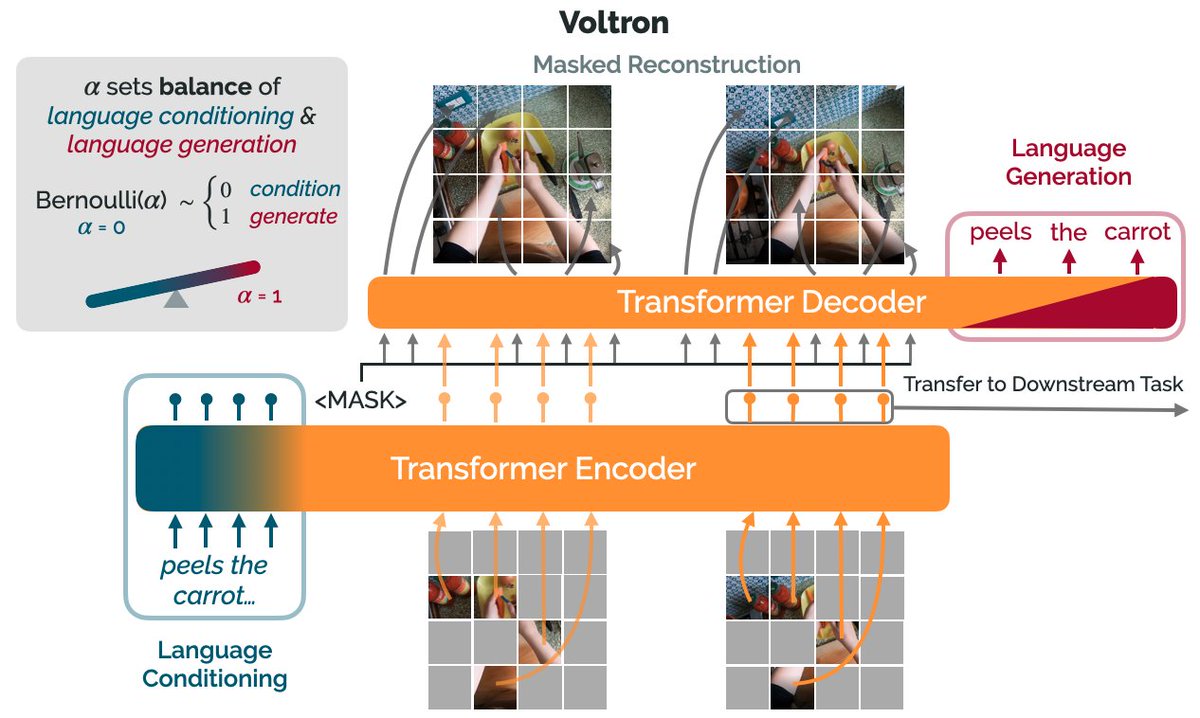

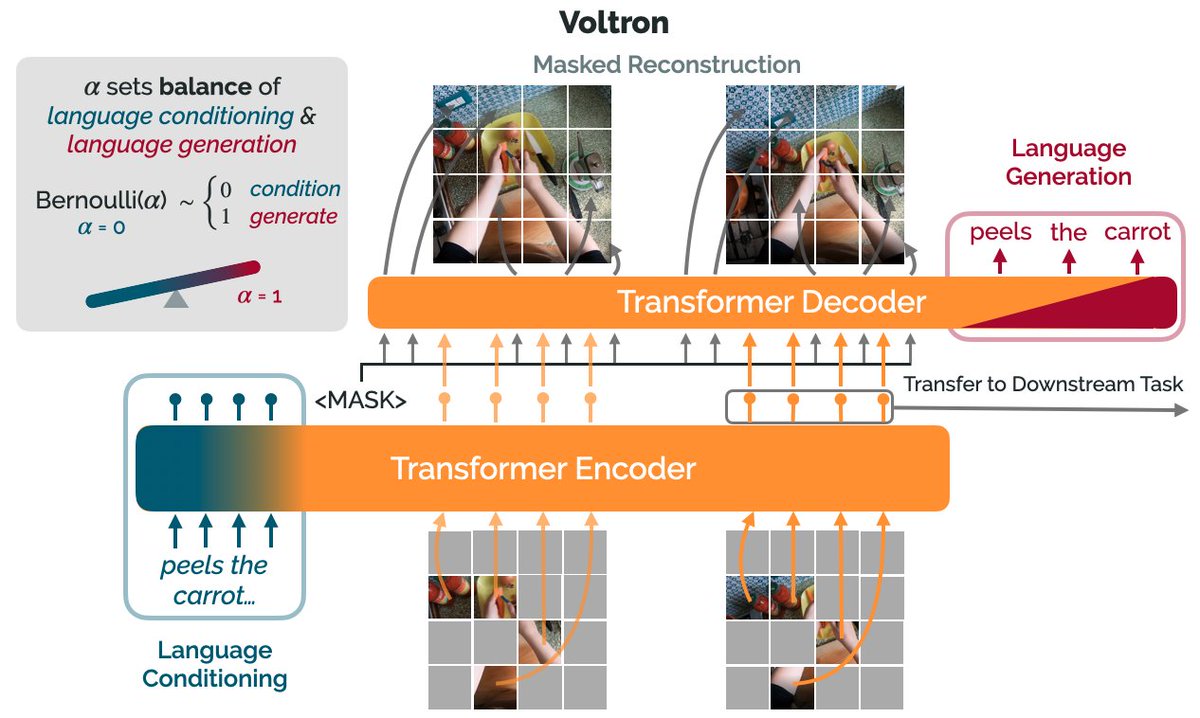

How can we use language supervision to learn better visual representations for robotics?

Introducing Voltron: Language-Driven Representation Learning for Robotics!

Paper: https://t.co/gIsRPtSjKz

Models: https://t.co/NOB3cpATYG

Evaluation: https://t.co/aOzQu95J8z

🧵👇(1 / 12)

Videos of humans performing everyday tasks (Something-Something-v2, Ego4D) offer a rich and diverse resource for learning representations for robotic manipulation.

Yet an underused part of these datasets is the rich natural language annotation accompanying each video. (2/12)

The Voltron framework offers a simple way to use language supervision to shape representation learning, building off of prior work in representations for robotics like MVP (https://t.co/Pb0mk9hb4i) and R3M (https://t.co/o2Fkc3fP0e).

The secret is *balance* (3/12)

Starting with a masked autoencoder over frames from these video clips, make a choice:

1) Condition on language and improve our ability to reconstruct the scene.

2) Generate language given the visual representation and improve our ability to describe what's happening. (4/12)

By trading off *conditioning* and *generation* we show that we can learn 1) better representations than prior methods, and 2) explicitly shape the balance of low and high-level features captured.

Why is the ability to shape this balance important? (5/12)
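One way to picture the trade-off between *conditioning* and *generation* is as a per-step choice between the two objectives. The sketch below is only an illustration under stated assumptions: the thread does not say how the balance is implemented, so the mixing weight `alpha` and the helper names `pick_objective` and `objective_schedule` are hypothetical and are not taken from the Voltron code.

```python
import random
from collections import Counter
from typing import List


def pick_objective(alpha: float, rng: random.Random) -> str:
    """Return "condition" with probability alpha, otherwise "generate".

    alpha is a hypothetical mixing weight (an assumption for this sketch):
    1.0 means always condition on language, 0.0 means always generate it.
    """
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return "condition" if rng.random() < alpha else "generate"


def objective_schedule(alpha: float, num_steps: int, seed: int = 0) -> List[str]:
    """Sample which objective each training step would use."""
    rng = random.Random(seed)
    return [pick_objective(alpha, rng) for _ in range(num_steps)]


if __name__ == "__main__":
    # With alpha = 0.5 the two objectives are picked roughly equally often.
    print(Counter(objective_schedule(alpha=0.5, num_steps=1000)))
```

Moving `alpha` towards 1.0 would favour reconstruction with language as an input, and moving it towards 0.0 would favour describing the scene from the visual representation.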
