I know some people who seem (to me) more concerned with receiving VALIDATION for their mental health issues than solving them.

They seem to care most about other people BELIEVING their problems are real.

I'm curious about this.

Or it's important to them that others believe that they're actually depressed, not "just sad."

If no one cuts you slack because of your mental health stuff, maybe you're in a really bad place, so you need people to believe you.

https://t.co/07v275i2gt

While she said some things I agree with, I rolled my eyes at her use of the word "trauma" because it seemed to me a transparent way to show political support to folks whose trauma narratives are important to...

I guess there's a general thing here: the more you can claim to have been harmed, the more likely people are to rally to support you.

I was once in a social dynamic where I would construct things such that I was visibly sad or put upon, because that was the only way I knew to receive (a type of) affection.

They need it, and they need it to be believed, because that's the only way they can feel loved and supported.

(Though that raises the question of WHY people have that need.)

The establishment certifies that this problem is HARD, you're not expected to just be able to trivially solve it. Which gives one protection against others' claims (or even demands) that you can and should.

Do you have a story for what's going on?

More from Eli Tyre

I started by simply stating that I thought that the arguments that I had heard so far don't hold up, and seeing if anyone was interested in going into it in depth with

CritRats!

— Eli Tyre (@EpistemicHope) December 26, 2020

I think AI risk is a real existential concern, and I claim that the CritRat counterarguments that I've heard so far (keywords: universality, person, moral knowledge, education, etc.) don't hold up.

Anyone want to hash this out with me? https://t.co/Sdm4SSfQZv

So far, a few people have engaged pretty extensively with me, for instance, scheduling video calls to talk about some of the stuff, or long private chats.

(Links to some of those that are public at the bottom of the thread.)

But in addition to that, there has been a much more sprawling conversation happening on twitter, involving a much larger number of people.

Having talked to a number of people, I then offered a paraphrase of the basic counter that I was hearing from people of the Crit Rat persuasion.

ELI'S PARAPHRASE OF THE CRIT RAT STORY ABOUT AGI AND AI RISK

— Eli Tyre (@EpistemicHope) January 5, 2021

There are two things that you might call "AI".

The first is non-general AI, which is a program that follows some pre-set algorithm to solve a pre-set problem. This includes modern ML.

In general, I am super up for short (1 to 10 hour) adversarial collaborations.

— Eli Tyre (@EpistemicHope) December 23, 2020

If you think I'm wrong about something, and want to dig into the topic with me to find out what's up / prove me wrong, DM me.

For instance, while I heartily agree with lots of what is said in this video, I don't think that the conclusion about how to prevent (the bad kind of) human extinction, with regard to AGI, follows.

There are a number of reasons to think that AGI will be more dangerous than most people are, despite both people and AGIs being qualitatively the same sort of thing (explanatory knowledge-creating entities).

And I maintain that, because of practical/quantitative (not fundamental/qualitative) differences, the development of AGI / TAI is very likely to destroy the world by default.

(I'm not clear on exactly how much disagreement there is. In the video above, Deutsch says "Building an AGI with perverse emotions that lead it to immoral actions would be a crime."

You May Also Like

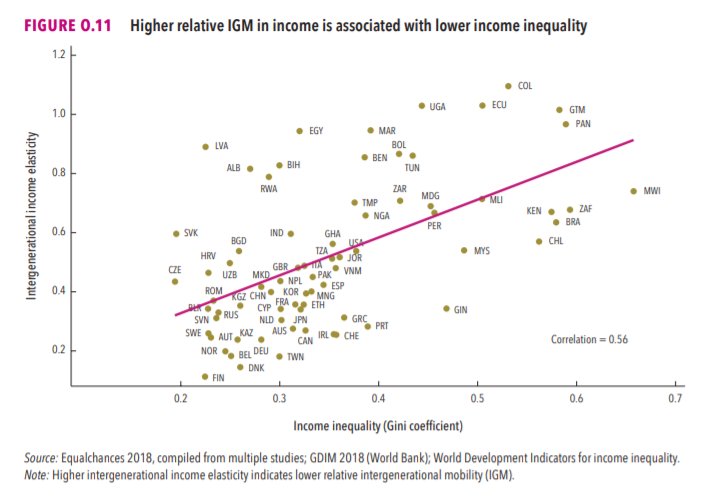

This New York Times feature shows China with a Gini Index of less than 30, which would make it more equal than Canada, France, or the Netherlands. https://t.co/g3Sv6DZTDE

That's weird. Income inequality in China is legendary.

Let's check this number.

2/The New York Times cites the World Bank's recent report, "Fair Progress? Economic Mobility across Generations Around the World".

The report is available here:

3/The World Bank report has a graph in which it appears to show the same value for China's Gini - under 0.3.

The graph cites the World Development Indicators as its source for the income inequality data.

4/The World Development Indicators are available at the World Bank's website.

Here's the Gini index: https://t.co/MvylQzpX6A

It looks as if the latest estimate for China's Gini is 42.2.

That estimate is from 2012.

5/A Gini of 42.2 would put China in the same neighborhood as the U.S., whose Gini was estimated at 41 in 2013.

I can't find the <30 number anywhere. The only other estimate in the tables for China is from 2008, when it was estimated at 42.8.
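A quick aside on the units, since the thread mixes them: the World Development Indicators report the Gini index on a 0 to 100 scale (42.2), while the report's graph appears to use a 0 to 1 coefficient (under 0.3). The sketch below is only an illustration of how the two scales relate, using a textbook rank-weighted formula on a made-up list of incomes. The function names `gini` and `to_index` and the sample data are assumptions for this example, not the World Bank's actual estimation method, which works from household survey data.

```python
def gini(incomes):
    """Gini coefficient (0 = perfect equality, near 1 = extreme inequality).

    Textbook rank-weighted formula on a plain list of non-negative incomes.
    This is an illustrative sketch, not the World Bank's survey methodology.
    """
    xs = sorted(incomes)
    n = len(xs)
    total = sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # G = 2 * sum(rank_i * x_i) / (n * total) - (n + 1) / n, ranks start at 1
    weighted = sum(rank * x for rank, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


def to_index(coefficient):
    """Convert a 0-1 Gini coefficient to the 0-100 index scale."""
    return 100 * coefficient


# Hypothetical toy data: everyone equal vs. one person holding everything.
equal = gini([1, 1, 1, 1])
concentrated = gini([0, 0, 0, 1])
print(equal, concentrated)

# On the index scale, a coefficient of 0.422 corresponds to roughly 42.2,
# so "under 0.3" on the graph means "under 30" in the indicator tables.
print(round(to_index(0.422), 1))
```

So the scale difference alone does not explain the gap: a value under 0.3 on the graph still corresponds to an index under 30, not to the 42.2 in the tables.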

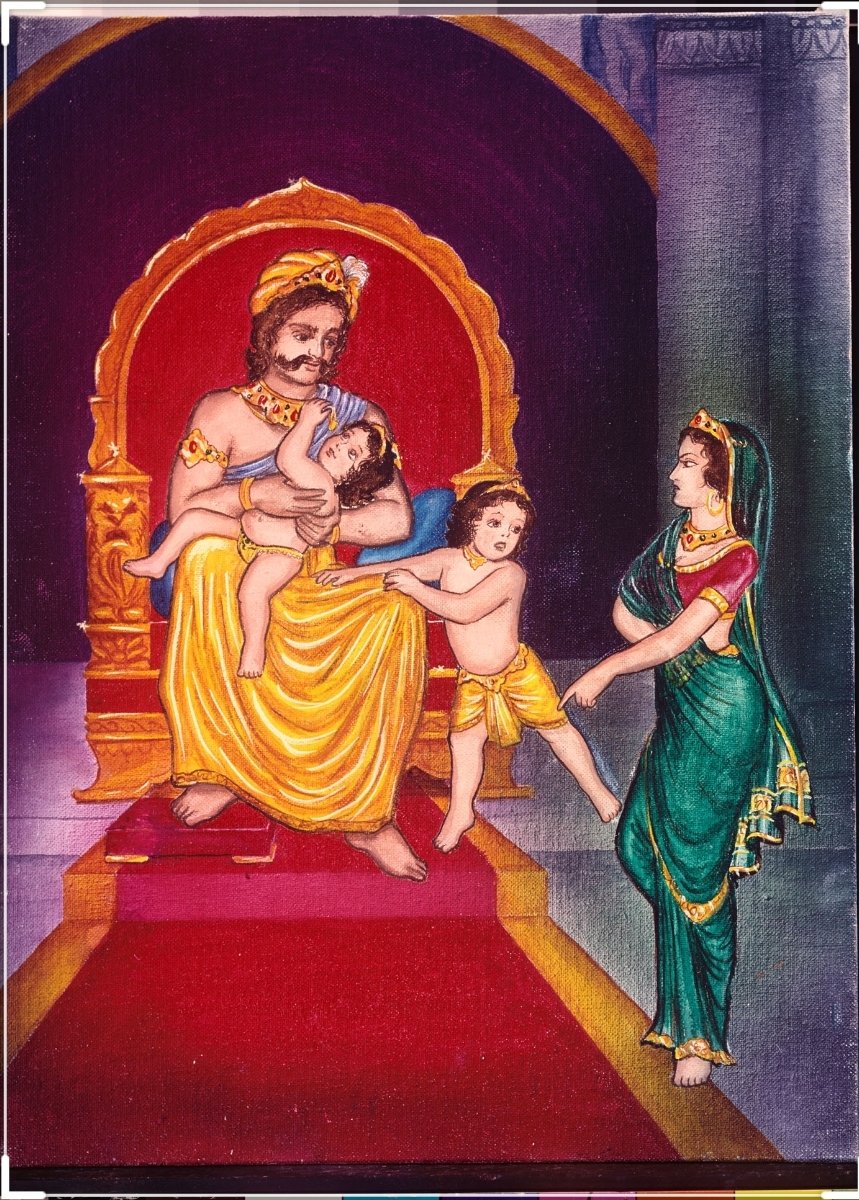

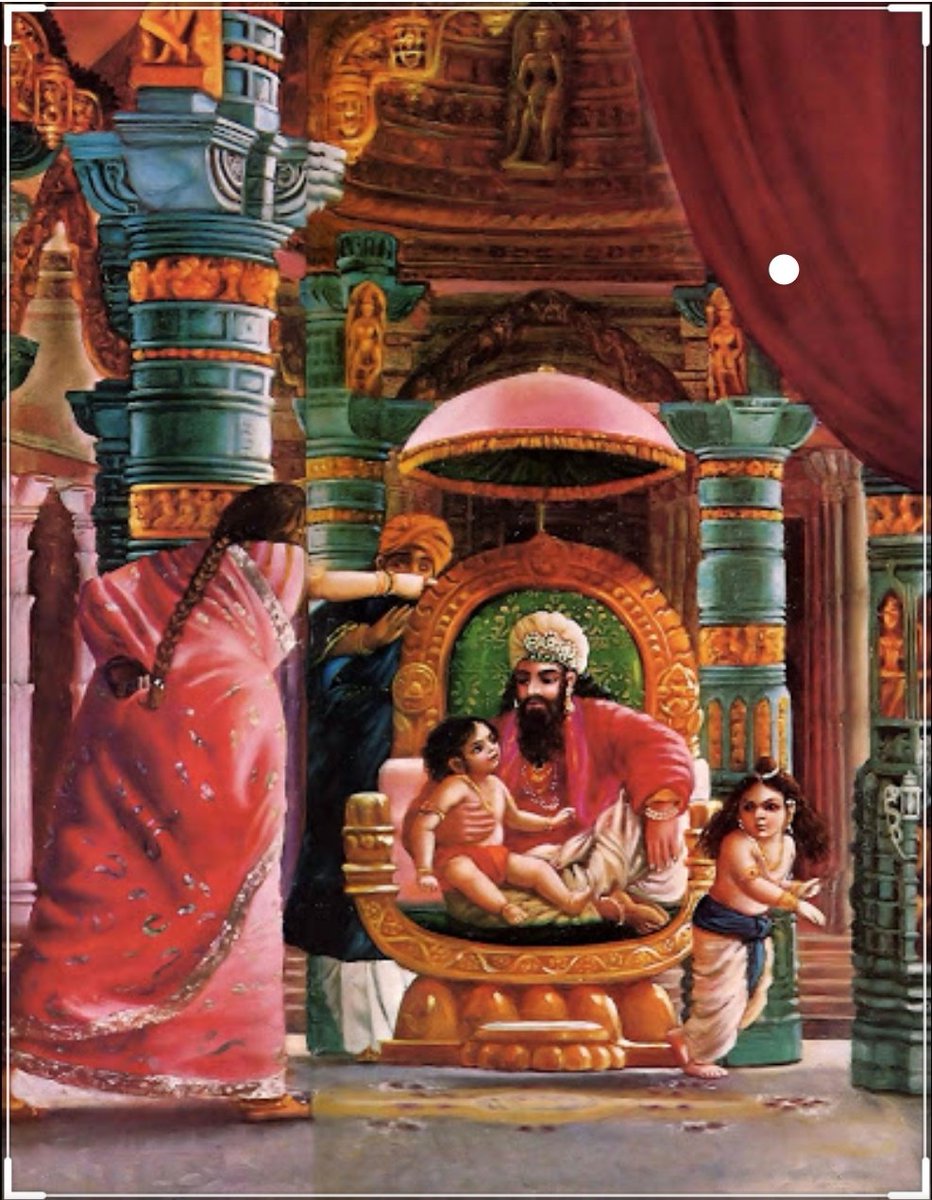

Once upon a time there was a Raja named Uttānapāda, born of Svayambhuva Manu, the 1st man on earth. He had 2 beautiful wives, Suniti & Suruchi, & two sons were born of them, Dhruva & Uttama respectively.

#talesofkrishna https://t.co/E85MTPkF9W

Prabhu says i reside in the heart of my bhakt.

— Right Singh (@rightwingchora) December 21, 2020

Guess the event. pic.twitter.com/yFUmbfe5KL

Now Suniti was the daughter of a tribal chief, while Suruchi was the daughter of a rich king. Hence Suruchi was always favored by the Raja, while Suniti was ignored. But while Suniti was gentle & kind-hearted by nature, Suruchi was venomous inside.

#KrishnaLeela

The story is of a time when, ideally, the eldest son of the king became the heir to the throne. Hence the sinhasan of the Raja belonged to Dhruva. This is why Suruchi, who was the 2nd wife, nourished poison in her heart for Dhruva, as she knew her son would never get the throne.

One day, when Dhruva was just 5 years old, he went to sit on his father's lap. Suruchi, the jealous queen, got enraged and shoved him away from the Raja, as she never wanted the Raja to shower Dhruva with his fatherly affection.

Dhruva protested, asking his stepmother, "Why can't I sit on my own father's lap?" A furious Suruchi berated him, saying, "Only God can allow you that privilege. Go ask him."