The Dutch Protocol is the basis on which the entire puberty blocking experiment rests.

For this experiment they used two scales, a boy scale and a girl scale and these two scales asked very different questions. 1/4

Before intervention, they assessed children using a sex-congruent GD scale.

— Gender: A Wider Lens Podcast (@widerlenspod) March 11, 2022

Post-intervention they switch to the opposite sex scale.

Does this accurately assess changes in the participants\u2019 gender dysphoria?https://t.co/YSiUQpfMCZ @RethinkIME @stellaomalley3 @genspect pic.twitter.com/0s4q5wV272

More from All

@franciscodeasis https://t.co/OuQaBRFPu7

Unfortunately the "This work includes the identification of viral sequences in bat samples, and has resulted in the isolation of three bat SARS-related coronaviruses that are now used as reagents to test therapeutics and vaccines." were BEFORE the

chimeric infectious clone grants were there.https://t.co/DAArwFkz6v is in 2017, Rs4231.

https://t.co/UgXygDjYbW is in 2016, RsSHC014 and RsWIV16.

https://t.co/krO69CsJ94 is in 2013, RsWIV1. notice that this is before the beginning of the project

starting in 2016. Also remember that they told about only 3 isolates/live viruses. RsSHC014 is a live infectious clone that is just as alive as those other "Isolates".

P.D. somehow is able to use funds that he have yet recieved yet, and send results and sequences from late 2019 back in time into 2015,2013 and 2016!

https://t.co/4wC7k1Lh54 Ref 3: Why ALL your pangolin samples were PCR negative? to avoid deep sequencing and accidentally reveal Paguma Larvata and Oryctolagus Cuniculus?

Unfortunately the "This work includes the identification of viral sequences in bat samples, and has resulted in the isolation of three bat SARS-related coronaviruses that are now used as reagents to test therapeutics and vaccines." were BEFORE the

chimeric infectious clone grants were there.https://t.co/DAArwFkz6v is in 2017, Rs4231.

https://t.co/UgXygDjYbW is in 2016, RsSHC014 and RsWIV16.

https://t.co/krO69CsJ94 is in 2013, RsWIV1. notice that this is before the beginning of the project

starting in 2016. Also remember that they told about only 3 isolates/live viruses. RsSHC014 is a live infectious clone that is just as alive as those other "Isolates".

P.D. somehow is able to use funds that he have yet recieved yet, and send results and sequences from late 2019 back in time into 2015,2013 and 2016!

https://t.co/4wC7k1Lh54 Ref 3: Why ALL your pangolin samples were PCR negative? to avoid deep sequencing and accidentally reveal Paguma Larvata and Oryctolagus Cuniculus?

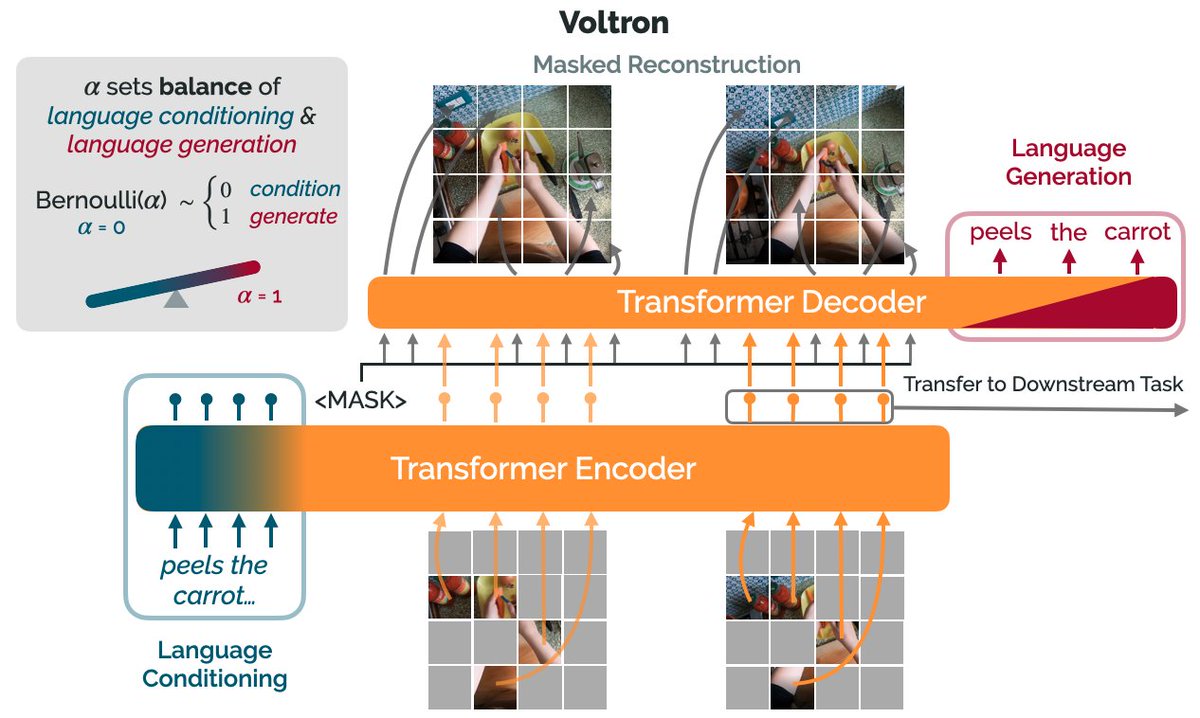

How can we use language supervision to learn better visual representations for robotics?

Introducing Voltron: Language-Driven Representation Learning for Robotics!

Paper: https://t.co/gIsRPtSjKz

Models: https://t.co/NOB3cpATYG

Evaluation: https://t.co/aOzQu95J8z

🧵👇(1 / 12)

Videos of humans performing everyday tasks (Something-Something-v2, Ego4D) offer a rich and diverse resource for learning representations for robotic manipulation.

Yet, an underused part of these datasets are the rich, natural language annotations accompanying each video. (2/12)

The Voltron framework offers a simple way to use language supervision to shape representation learning, building off of prior work in representations for robotics like MVP (https://t.co/Pb0mk9hb4i) and R3M (https://t.co/o2Fkc3fP0e).

The secret is *balance* (3/12)

Starting with a masked autoencoder over frames from these video clips, make a choice:

1) Condition on language and improve our ability to reconstruct the scene.

2) Generate language given the visual representation and improve our ability to describe what's happening. (4/12)

By trading off *conditioning* and *generation* we show that we can learn 1) better representations than prior methods, and 2) explicitly shape the balance of low and high-level features captured.

Why is the ability to shape this balance important? (5/12)

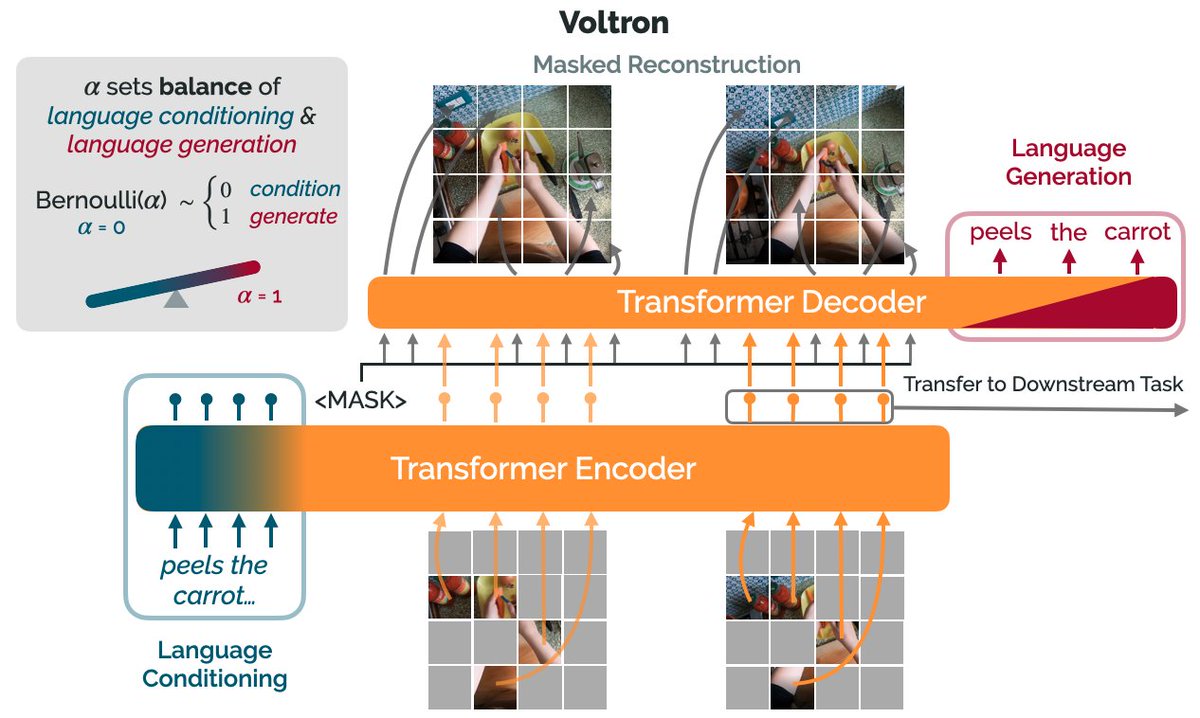

Introducing Voltron: Language-Driven Representation Learning for Robotics!

Paper: https://t.co/gIsRPtSjKz

Models: https://t.co/NOB3cpATYG

Evaluation: https://t.co/aOzQu95J8z

🧵👇(1 / 12)

Videos of humans performing everyday tasks (Something-Something-v2, Ego4D) offer a rich and diverse resource for learning representations for robotic manipulation.

Yet, an underused part of these datasets are the rich, natural language annotations accompanying each video. (2/12)

The Voltron framework offers a simple way to use language supervision to shape representation learning, building off of prior work in representations for robotics like MVP (https://t.co/Pb0mk9hb4i) and R3M (https://t.co/o2Fkc3fP0e).

The secret is *balance* (3/12)

Starting with a masked autoencoder over frames from these video clips, make a choice:

1) Condition on language and improve our ability to reconstruct the scene.

2) Generate language given the visual representation and improve our ability to describe what's happening. (4/12)

By trading off *conditioning* and *generation* we show that we can learn 1) better representations than prior methods, and 2) explicitly shape the balance of low and high-level features captured.

Why is the ability to shape this balance important? (5/12)