I know some people who seem (to me) more concerned with receiving VALIDATION for their mental health issues than solving them.

They seem to care most about other people BELIEVING their problems are real.

I'm curious about this.

Or it's important to them that others believe that they're actually depressed, not "just sad."

If no one cuts you slack because of your mental health stuff, maybe you're in a really bad place, so you need people to believe you.

https://t.co/07v275i2gt

While she said some things I agree with, I rolled my eyes at her use of the word "trauma" because it seemed to me a transparent way to show political support to folks whose trauma narratives are important to...

I guess there's a general thing here: the more you can claim to have been harmed, the more likely people are to rally to support you.

I was once in a social dynamic where I would construct things such that I was visibly sad or put upon, because that was the only way I knew to receive (a type of) affection.

They need it, and they need it to be believed, because that's the only way they can feel loved and supported.

(Though that begs the question of WHY people have that need.)

The establishment certifies that this problem is HARD: you're not expected to just be able to trivially solve it. That gives one protection against others' claims (or even demands) that you can and should.

Do you have a story for what's going on?

More from Eli Tyre

In general, I am super up for short (1 to 10 hour) adversarial collaborations.

— Eli Tyre (@EpistemicHope) December 23, 2020

If you think I'm wrong about something, and want to dig into the topic with me to find out what's up / prove me wrong, DM me.

For instance, while I heartily agree with lots of what is said in this video, I don't think that the conclusion about how to prevent (the bad kind of) human extinction, with regard to AGI, follows.

There are a number of reasons to think that AGI will be more dangerous than most people are, despite both people and AGIs being qualitatively the same sort of thing (explanatory knowledge-creating entities).

And I maintain that, because of practical/quantitative (not fundamental/qualitative) differences, the development of AGI / TAI is very likely to destroy the world, by default.

(I'm not clear on exactly how much disagreement there is. In the video above, Deutsch says "Building an AGI with perverse emotions that lead it to immoral actions would be a crime."

I started by simply stating that I thought that the arguments that I had heard so far don't hold up, and seeing if anyone was interested in going into it in depth with

CritRats!

— Eli Tyre (@EpistemicHope) December 26, 2020

I think AI risk is a real existential concern, and I claim that the CritRat counterarguments that I've heard so far (keywords: universality, person, moral knowledge, education, etc.) don't hold up.

Anyone want to hash this out with me? https://t.co/Sdm4SSfQZv

So far, a few people have engaged pretty extensively with me, for instance, scheduling video calls to talk about some of the stuff, or long private chats.

(Links to some of those that are public at the bottom of the thread.)

But in addition to that, there has been a much more sprawling conversation happening on twitter, involving a much larger number of people.

Having talked to a number of people, I then offered a paraphrase of the basic counter that I was hearing from people of the Crit Rat persuasion.

ELI'S PARAPHRASE OF THE CRIT RAT STORY ABOUT AGI AND AI RISK

— Eli Tyre (@EpistemicHope) January 5, 2021

There are two things that you might call "AI".

The first is non-general AI, which is a program that follows some pre-set algorithm to solve a pre-set problem. This includes modern ML.

More from Health

“Military history” is only in decline if you—like the author & experts in this obnoxious piece—see the subject as a narrowly defined, white dude-oriented, guns & bayonets approach. The field is 1000% better off w/today’s diversity of topics & historians. https://t.co/dUf3OWyVpQ

— Jonathan S. Jones (@_jonathansjones) February 1, 2021

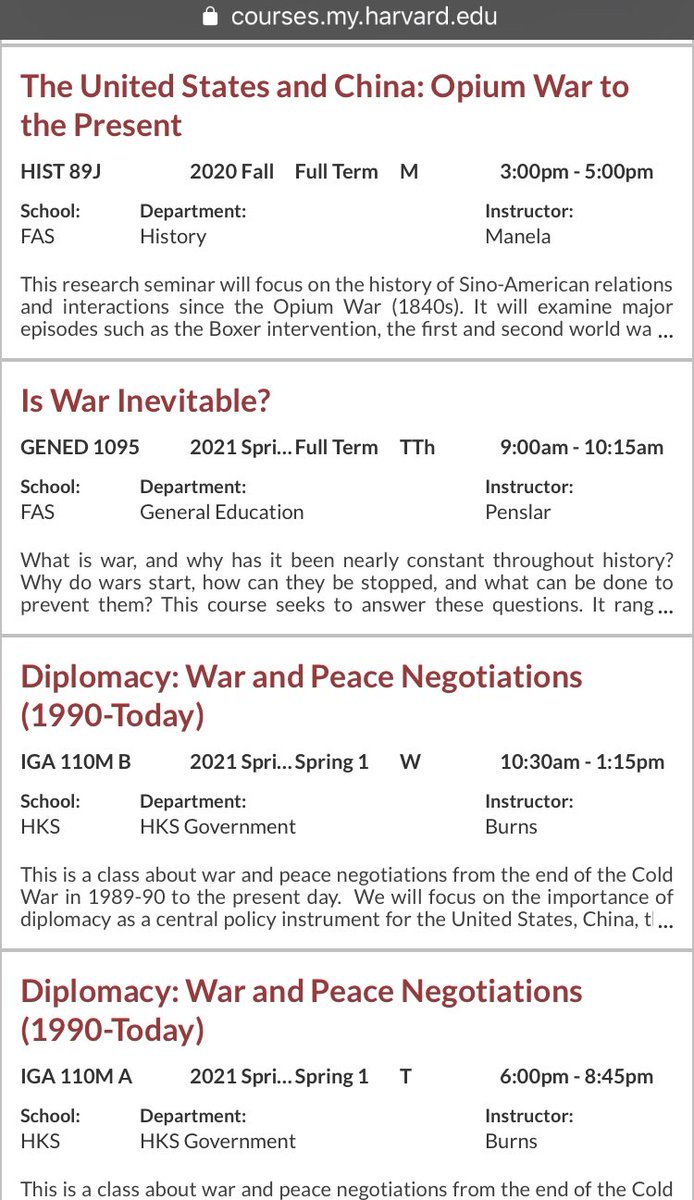

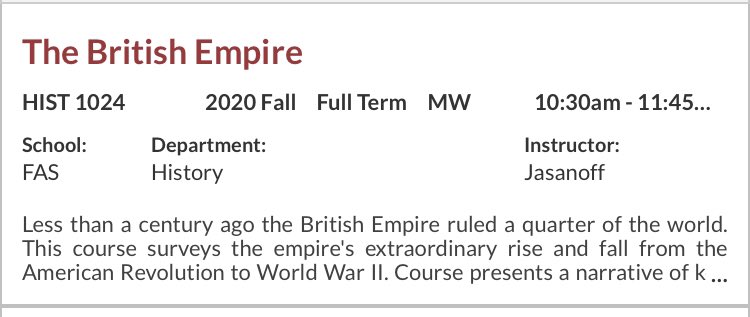

First off, Harvard students literally have multiple sections of military history that they can take listed. (It appears these ones are taught at MIT, so they might have to walk down the street for these) but... 2/

Say they want to stay on campus...they can only take numerous classes on war and diplomacy...3/

They have an entire class on Yalta. That’s right. An entire class on Yalta. 4/

But wait! There is more! They can take the British Empire, The Fall of the Roman Empire for those wanting traditional topics... 5/

Someone with decades of training gives someone with none advice usually packed into 1-3 mins. Huge amount is based on trust. Huge potential for bias built in. But also there is no obligation to provide real alternative options.

MAiD isn't eugenics. The task for the medical profession is to ensure informed consent. Failures on that front should result in enforcement of the law. But Bill C-7 is the result of the existing regime imposing unnecessary, unconstitutional harms by blocking access to MAiD.

— Emmett Macfarlane (@EmmMacfarlane) February 13, 2021

I am classified as 'gifted' (obnoxious and ableist term). I mention because of what I am about to say. You all know that I was an ambulatory wheelchair user previously - could stand - but contractures have ended that. When I pleaded for physio, turned down. But did you know...

I recently was chatting with a doctor I know and explaining what happened and the day the physiatrist told me it was too late and nothing could be done. The doctor asked if I'd like one of her friends/colleagues to give second opinion. I said yes please! So...

She said, "Can you send me the MRI and other imaging they did to determine it wasn't possible to address your contractures?"

Me: What?

Dr.: They did an MRI first before deciding, right?

Me: No

Dr: What did they do??!

Me: Examined me for 2 minutes.

Dr: I am very angry rn. Can't talk.

My point is you don't even know if you are making "informed" decisions because the only source of information you have is the person who has already decided what they think you should do. And may I remind you of a word called 'compliance.'