The YouTube algorithm that I helped build in 2011 still recommends the flat earth theory by the *hundreds of millions*. This investigation by @RawStory shows some of the real-life consequences of this badly designed AI.

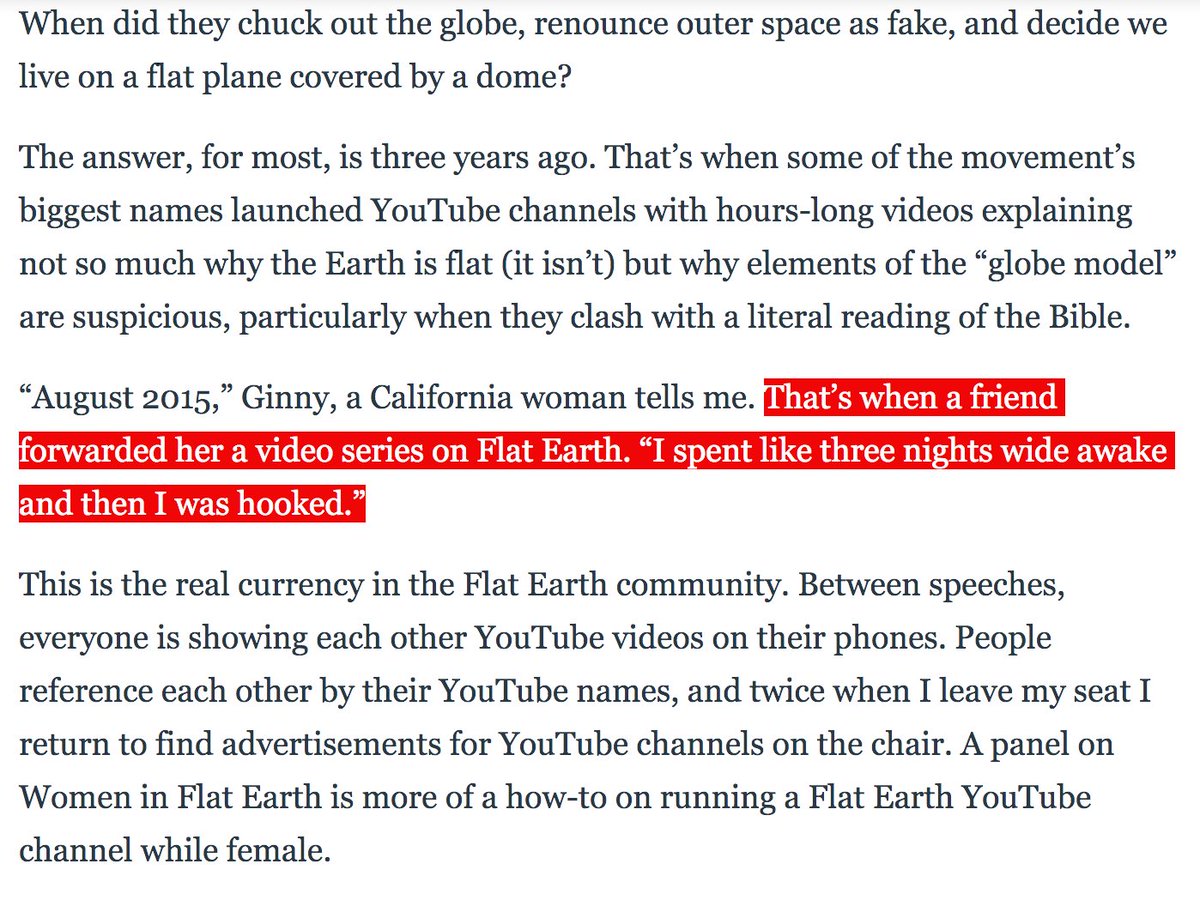

Flat Earth conference attendees explain how they have been brainwashed by YouTube and Infowars https://t.co/gqZwGXPOoc

— Raw Story (@RawStory) November 18, 2018

https://t.co/EKed0B1XhD

8/

https://t.co/yZpqdiJgsR

https://t.co/cqYz3SbDO8

https://t.co/M2mVtZRut9

https://t.co/myxPsrhlKa

Flat-earthers are the canaries in the coal mine 18/

I think they're missing the point. 19/

With AI in charge of our information, we're facing a brand new, existential problem that concerns all of us. We need to develop tools to understand it better. 20/

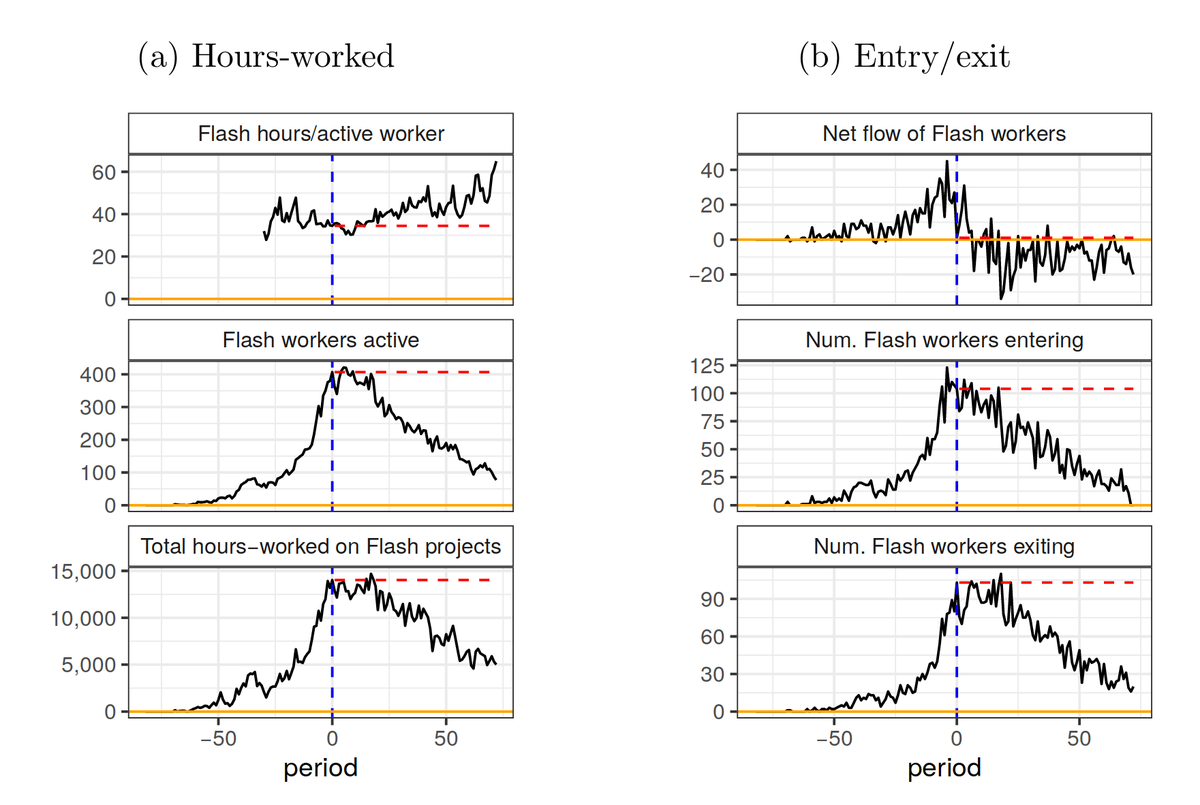

From the algorithm's point of view, flat earth is a gold mine.

Full article: https://t.co/LPjCKpbwXj

21/

https://t.co/LPjCKpbwXj 22/

More from Tech

Ok, I’ve told this story a few times, but maybe never here. Here we go. 🧵👇

I was about 6. I was in the car with my mother. We were driving a few hours from home to go to Orlando. My parents were letting me audition for a tv show. It would end up being my first job. I was very excited. But, in the meantime we drove and listened to Rush’s show.

There was some sort of trivia question they posed to the audience. I don’t remember what the riddle was, but I remember I knew the answer right away. It was phrased in this way that was somehow just simpler to see from a kid’s perspective. The answer was CAROUSEL. I was elated.

My mother was THRILLED. She insisted that we call into the show using her “for emergencies only” giant cell phone. It was this phone:

I called in. The phone rang for a while, but someone answered. It was an impatient-sounding dude. The screener. I said I had the trivia answer. He wasn’t charmed, I could hear him rolling his eyes. He asked me what it was. I told him. “Please hold.”

Wish I had the audio of Rush Limbaugh telling me off on the phone on his show when I was six. In the meantime, RIP.

— Shannon Woodward (@shannonwoodward) February 17, 2021

You May Also Like

There are many strategies in the market 📉, and it's possible to get a consistent 4% monthly return if you master 💪 one strategy.

One of those strategies which I like is Iron Fly ✈️

A few important points on the Iron Fly strategy

This is a fixed-loss 🔴, defined strategy, so you are aware of your losses. You know your risk ⚠️ and breakeven points to exit the positions.

Risk is defined, so at a psychological 🧠 level you are at peace 🙋♀️
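To make "fixed loss" and "breakeven points" concrete, here is a minimal sketch of the payoff at expiry. It assumes the usual short iron fly construction (sell an at-the-money call and put, buy a call and a put equally far out on each side), which the thread itself does not spell out. The strike, wing width, and net credit below are hypothetical numbers, and brokerage and taxes are ignored.

```python
def iron_fly_payoff(spot, atm_strike, wing_width, net_credit):
    """Per-unit profit or loss at expiry of a short iron fly.

    Assumed structure (not stated in the thread): short call and short put
    at atm_strike, long call at atm_strike + wing_width, long put at
    atm_strike - wing_width. net_credit is the premium collected up front.
    """
    # The short straddle loses the distance between spot and the strike...
    straddle_loss = abs(spot - atm_strike)
    # ...and the long wings cap that loss at the wing width.
    capped_loss = min(straddle_loss, wing_width)
    return net_credit - capped_loss


def iron_fly_summary(atm_strike, wing_width, net_credit):
    """Maximum profit, maximum loss, and breakevens for the same structure."""
    return {
        "max_profit": net_credit,
        "max_loss": wing_width - net_credit,
        "lower_breakeven": atm_strike - net_credit,
        "upper_breakeven": atm_strike + net_credit,
    }


# Hypothetical example values, chosen only for illustration.
summary = iron_fly_summary(atm_strike=18000, wing_width=300, net_credit=200)
print(summary)
for spot in (17500, 17800, 18000, 18200, 19000):
    print(spot, iron_fly_payoff(spot, 18000, 300, 200))
```

However far the market moves, the loss in this sketch never exceeds the wing width minus the credit received, which is what makes the risk known in advance.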

How to implement

1. Should be done on Tuesday or Wednesday, after 1-2 pm, for next week's expiry.

2. Take a view of the market by looking at the daily chart.

3. Then do a weekly iron fly.

4. No need to hold this till expiry day.

5. Exit one day before expiry, or when you see more than 2% within the week.

6. High VIX is preferred for iron fly.

7. Can be executed with a small capital of 3-5 lakhs.

https://t.co/MYDgWkjYo8 have R:2R so overall it should be good.

8. If you are able to get a 6% return monthly, it means close to 100% return on your capital per annum (with monthly compounding).
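The annual figure depends on whether monthly gains are reinvested. A quick check of the arithmetic, as a sketch: the function name `annual_return` is only illustrative, and it assumes the same return every month, which real trading will not deliver.

```python
def annual_return(monthly_rate, compounding=True, months=12):
    """Annual return implied by a constant monthly return.

    compounding=True reinvests each month's gain; compounding=False
    simply adds up the monthly gains on the original capital.
    """
    if compounding:
        return (1 + monthly_rate) ** months - 1
    return monthly_rate * months


for rate in (0.04, 0.06):
    compounded = annual_return(rate)
    simple = annual_return(rate, compounding=False)
    print(f"{rate:.0%} monthly: {compounded:.1%} compounded, {simple:.1%} simple")
# 4% monthly: 60.1% compounded, 48.0% simple
# 6% monthly: 101.2% compounded, 72.0% simple
```

So "close to 100%" holds for 6% a month only when profits are reinvested. Without reinvestment it is 72%, and at 4% a month the figures are roughly 60% and 48%.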
