thread--- A lot of guys like to say things like "the GOP's role is to funnel opposition into a harmless direction" and that is true in a functional sense, that's what has happened. But the GOP imo has no actual role in The System. They are on the outside

but did you know Trump 2016 spent less than Romney in 2012 ($840 million)

Tip from the Monkey

Pangolins, September 2019 and PLA are the key to this mystery

Stay Tuned!

1. Yang

Meet Yang Ruifu, CCP's biological weapons expert https://t.co/JjB9TLEO95 via @Gnews202064

— Billy Bostickson 🏴👁&👁 🆓 (@BillyBostickson) October 11, 2020

Interesting expose of China's top bioweapons expert who oversaw fake pangolin research

Paper 1: https://t.co/TrXESKLYmJ

Paper 2: https://t.co/9LSJTNCn3l

Pangolin: https://t.co/2FUAzWyOcv pic.twitter.com/I2QMXgnkBJ

2. A jacobin capuchin dangling a flagellin pangolin on a javelin while playing a mandolin and strangling a mannequin on a paladin's palanquin, said Saladin

More to come tomorrow!

3. Yigang Tong

https://t.co/CYtqYorhzH

Archived: https://t.co/ncz5ruwE2W

4. YT Interview

Some bats & pangolins carry viruses related to SARS-CoV-2, found in SE Asia and in Yunnan, & the pangolins carrying SARS-CoV-2-related viruses were smuggled from SE Asia, so there is a possibility that SARS-CoV-2 was coming from

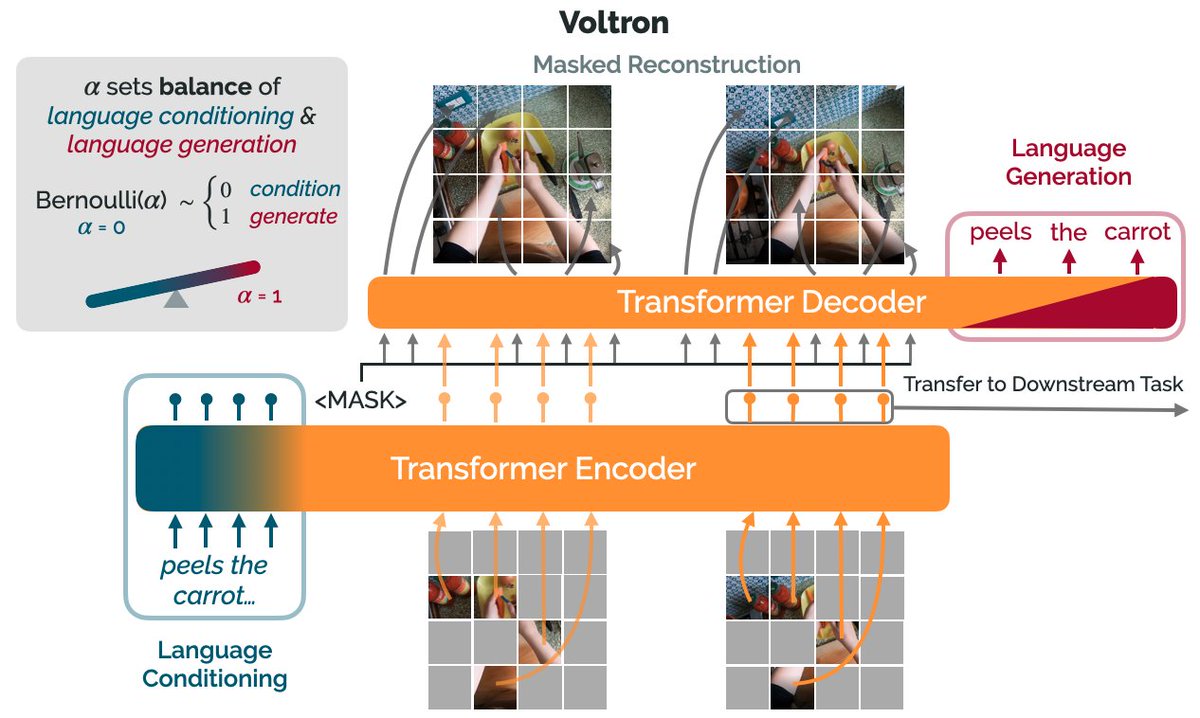

How can we use language supervision to learn better visual representations for robotics?

Introducing Voltron: Language-Driven Representation Learning for Robotics!

Paper: https://t.co/gIsRPtSjKz

Models: https://t.co/NOB3cpATYG

Evaluation: https://t.co/aOzQu95J8z

🧵👇(1 / 12)

Videos of humans performing everyday tasks (Something-Something-v2, Ego4D) offer a rich and diverse resource for learning representations for robotic manipulation.

Yet, an underused part of these datasets is the rich, natural language annotations accompanying each video. (2/12)

The Voltron framework offers a simple way to use language supervision to shape representation learning, building off of prior work in representations for robotics like MVP (https://t.co/Pb0mk9hb4i) and R3M (https://t.co/o2Fkc3fP0e).

The secret is *balance* (3/12)

Starting with a masked autoencoder over frames from these video clips, make a choice:

1) Condition on language and improve our ability to reconstruct the scene.

2) Generate language given the visual representation and improve our ability to describe what's happening. (4/12)

By trading off *conditioning* and *generation*, we show that we can learn 1) better representations than prior methods, and 2) explicitly shape the balance of low- and high-level features captured.

Why is the ability to shape this balance important? (5/12)
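
One way to picture the trade-off between *conditioning* and *generation* is a coin flip per training batch. The sketch below is only an illustration: the mixing weight `alpha`, the helpers `pick_mode`, `batch_loss` and `mode_fraction`, and the idea of sampling one mode per batch are all assumptions made for this example, not details confirmed in the thread. The loss values are placeholder numbers standing in for a real masked-autoencoder reconstruction loss and a captioning loss.

```python
import random


def pick_mode(alpha: float, rng: random.Random) -> str:
    """Pick 'condition' with probability alpha, otherwise 'generate'.

    alpha is a hypothetical knob: 1.0 means always condition on language,
    0.0 means always generate language.
    """
    return "condition" if rng.random() < alpha else "generate"


def batch_loss(mode: str, recon_loss: float, caption_loss: float) -> float:
    """Combine placeholder per-batch losses for the chosen mode (assumed scheme).

    condition: language is an input, so only reconstruction is scored.
    generate:  language is a target, so the captioning loss is added.
    """
    if mode == "condition":
        return recon_loss
    return recon_loss + caption_loss


def mode_fraction(alpha: float, steps: int = 10_000, seed: int = 0) -> float:
    """Fraction of simulated batches that end up in 'condition' mode."""
    rng = random.Random(seed)
    hits = sum(pick_mode(alpha, rng) == "condition" for _ in range(steps))
    return hits / steps
```

Under these assumptions, moving `alpha` toward 1.0 spends more batches on reconstruction with language as an input, and moving it toward 0.0 spends more on describing the scene, which is one simple way a single number could shift the balance of features.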

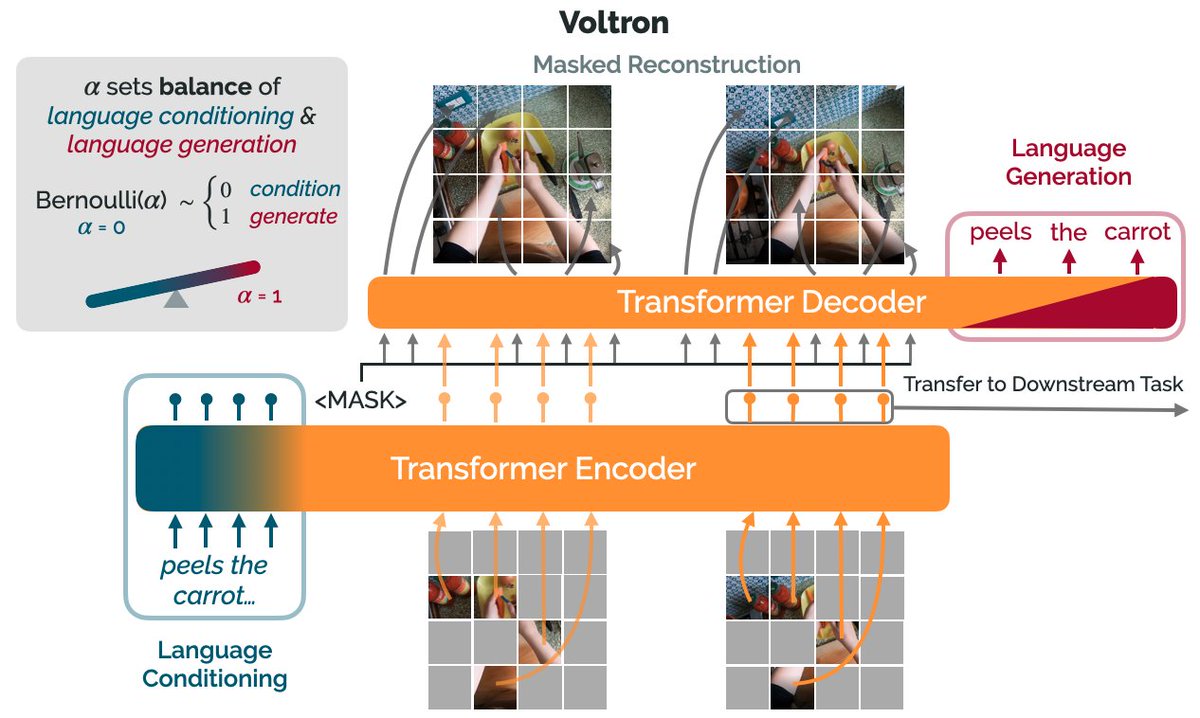
