Most commit messages are next to useless because they focus on WHAT was done instead of WHY.

This is exactly the wrong thing to focus on.

You can always reconstruct what changes a commit contains, but it's near impossible to unearth the reason it was done.

(thread)

You were almost certainly thinking "WHY is this like this?", not "What is a one-line summary of what happened in this commit?".

```
[one-line summary of changes]

Because:
- [relevant context]
- [why you decided to change things]
- [reason you're doing it now]

This commit:
- [does X]
- [does Y]
- [does Z]
```

First, it captures context that will be near impossible to recover later. Trust me, this stuff is gold.

Second, if you train yourself to ask why you're making each change, you'll tend to make better changes.
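One way to build the habit is to let Git nudge you. Below is a minimal sketch of a `commit-msg` hook, assuming you save it as `.git/hooks/commit-msg` and make it executable. Git does pass the message file path to that hook and aborts the commit on a non-zero exit, but `missing_sections` and the exact rules it enforces are my own assumptions, not anything Git ships with.

```python
#!/usr/bin/env python3
"""Sketch of a commit-msg hook that insists on the WHY sections."""
import sys

# Headings taken from the template; the rule that each one needs at
# least one "- " bullet directly under it is an assumption of this sketch.
REQUIRED_HEADINGS = ("Because:", "This commit:")


def missing_sections(message):
    """Return the required headings that have no bullet right after them."""
    # Lines starting with "#" are Git's editor comments, so skip them.
    lines = [ln.strip() for ln in message.splitlines() if not ln.startswith("#")]
    missing = []
    for heading in REQUIRED_HEADINGS:
        if heading not in lines:
            missing.append(heading)
            continue
        # Blank lines are skipped; the first real line must be a bullet.
        following = [ln for ln in lines[lines.index(heading) + 1:] if ln]
        if not following or not following[0].startswith("- "):
            missing.append(heading)
    return missing


def main(path):
    """Check the message file at `path`; return a process exit code."""
    with open(path, encoding="utf-8") as handle:
        missing = missing_sections(handle.read())
    if missing:
        print("Commit message needs bullets under: " + ", ".join(missing),
              file=sys.stderr)
        return 1
    return 0


# Git invokes the hook with the message file path as its only argument.
if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1]))
```

With this in place, a bare "refactor OrderWidget" is rejected, while a message with at least one bullet under each heading goes through.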

The first time you see a commit message like the above instead of "refactor OrderWidget", you'll be a convert.
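To make that contrast concrete, here is a small sketch that renders a message in the template's layout. `format_commit_message` is a hypothetical helper (not part of Git or any library), and every detail about OrderWidget in the usage example is invented purely for illustration.

```python
def format_commit_message(summary, reasons, changes):
    """Render a commit message in the "Because / This commit" layout.

    Hypothetical helper for illustration only; not part of Git.
    """
    if not summary.strip():
        raise ValueError("summary must not be empty")
    if not reasons:
        raise ValueError("give at least one reason: the WHY is the point")
    lines = [summary.strip(), "", "Because:"]
    lines += [f"- {reason}" for reason in reasons]
    lines += ["", "This commit:"]
    lines += [f"- {change}" for change in changes]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Every detail below is made up to show the shape of a filled-in message.
    print(format_commit_message(
        "Refactor OrderWidget to separate pricing from rendering",
        reasons=[
            "Pricing rules were duplicated in the widget and the checkout page",
            "The duplication made upcoming discount work risky",
            "Discount work starts soon, so this unblocks it",
        ],
        changes=[
            "Moves price calculation into its own module",
            "Leaves OrderWidget responsible for display only",
        ],
    ))
```

Six months later, the "Because" bullets are the part you can't get back from the diff.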

https://t.co/8e9p3x0zb0

https://t.co/KrOvHJPMXg

https://t.co/rnWpApDrTx

https://t.co/R7tAV3b8rx

You May Also Like

So friends here is the thread on the recommended pathway for new entrants in the stock market.

Here I will share what I believe are essentials for anybody who is interested in stock markets and the resources to learn them, its from my experience and by no means exhaustive..

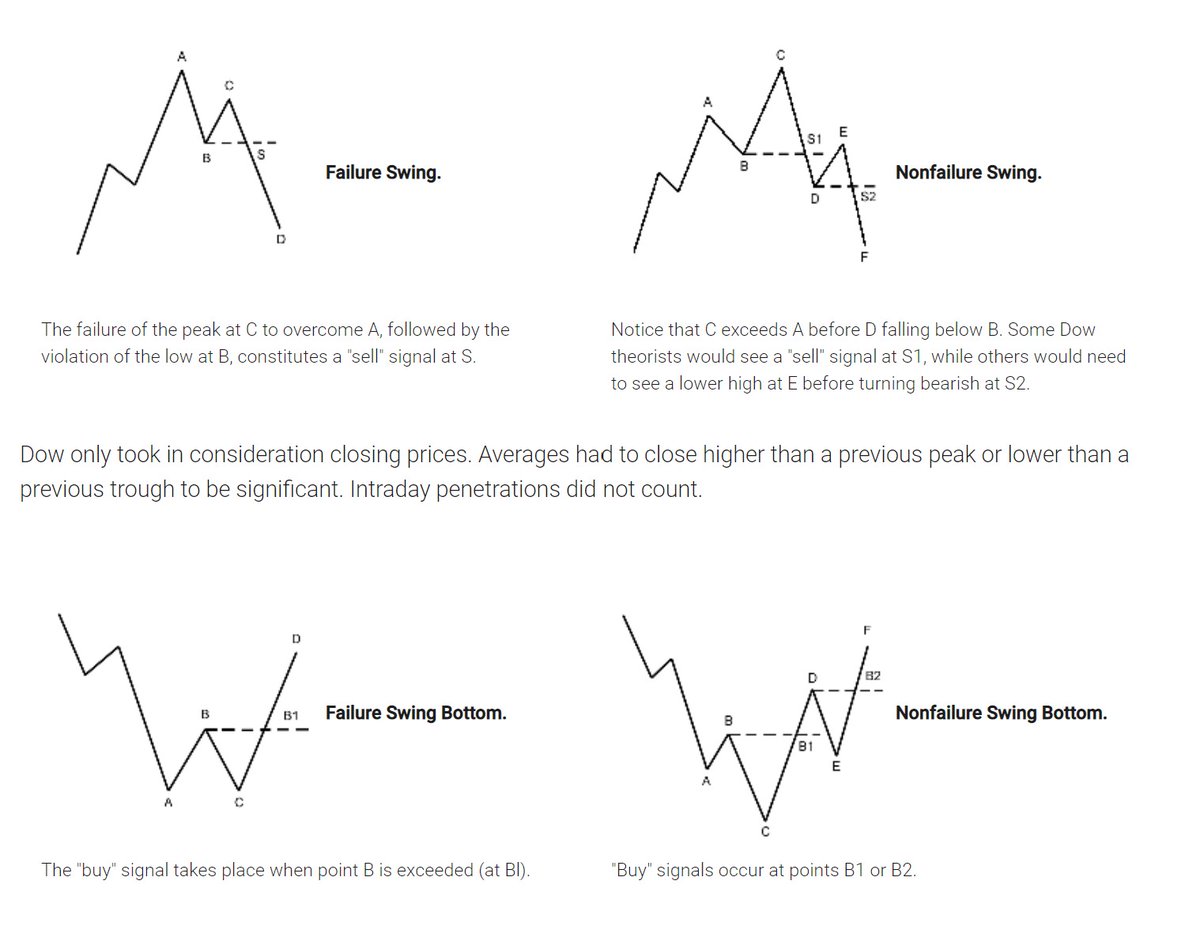

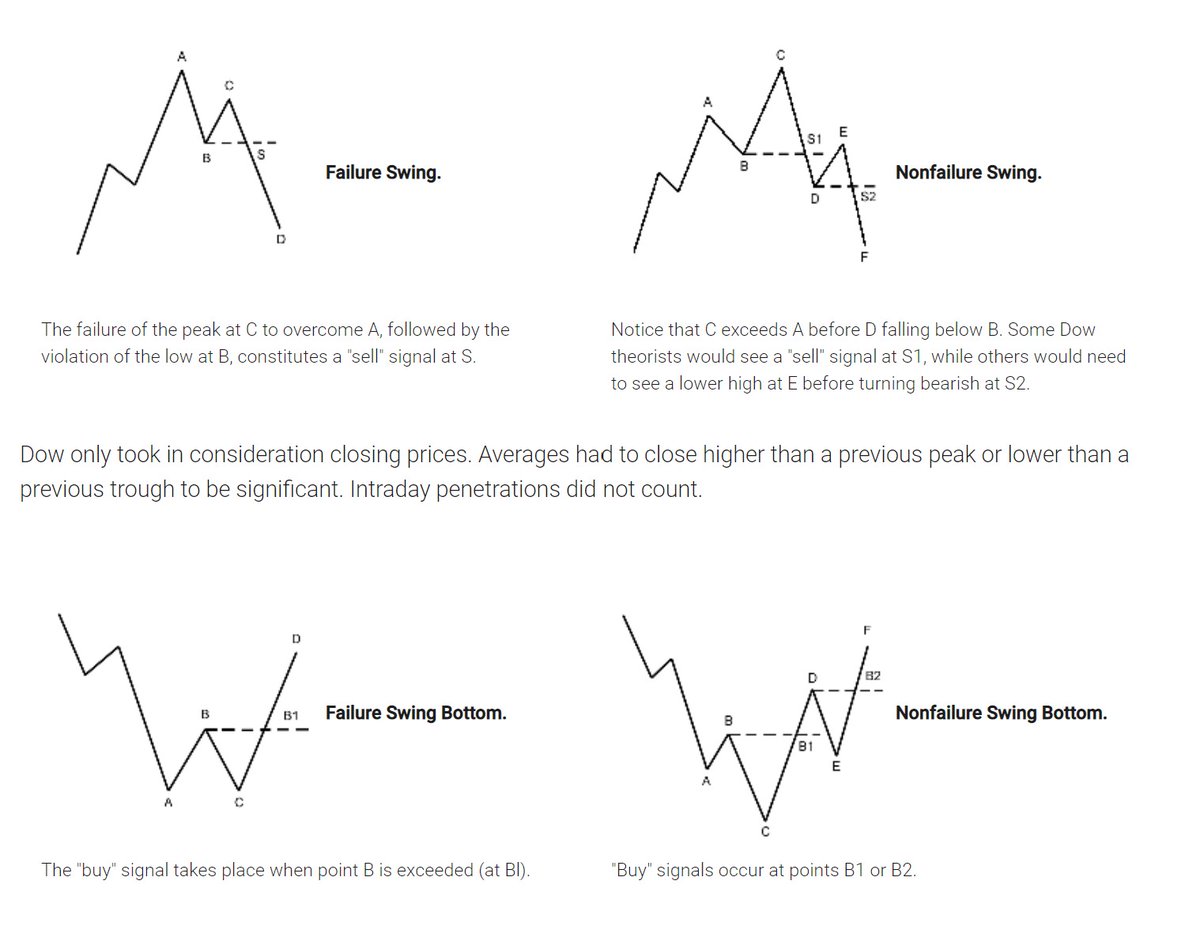

First the very basic : The Dow theory, Everybody must have basic understanding of it and must learn to observe High Highs, Higher Lows, Lower Highs and Lowers lows on charts and their

Even those who are more inclined towards fundamental side can also benefit from Dow theory, as it can hint start & end of Bull/Bear runs thereby indication entry and exits.

Next basic is Wyckoff's Theory. It tells how accumulation and distribution happens with regularity and how the market actually

Dow theory is old but

Here I will share what I believe are essentials for anybody who is interested in stock markets and the resources to learn them, its from my experience and by no means exhaustive..

First the very basic : The Dow theory, Everybody must have basic understanding of it and must learn to observe High Highs, Higher Lows, Lower Highs and Lowers lows on charts and their

Even those who are more inclined towards fundamental side can also benefit from Dow theory, as it can hint start & end of Bull/Bear runs thereby indication entry and exits.

Next basic is Wyckoff's Theory. It tells how accumulation and distribution happens with regularity and how the market actually

Dow theory is old but

Old is Gold....

— Professor (@DillikiBiili) January 23, 2020

this Bharti Airtel chart is a true copy of the Wyckoff Pattern propounded in 1931....... pic.twitter.com/tQ1PNebq7d