Authors Elvis

I have always emphasized the importance of mathematics in machine learning.

Here is a compilation of resources (books, videos & papers) to get you going.

(Note: It's not an exhaustive list, but I have carefully curated it based on my experience and observations.)

📘 Mathematics for Machine Learning

by Marc Peter Deisenroth, A. Aldo Faisal, and Cheng Soon Ong

https://t.co/zSpp67kJSg

Note: This is probably where you want to start. Go slowly and work through some examples. Pay close attention to the notation and get comfortable with it.

📘 Pattern Recognition and Machine Learning

by Christopher Bishop

Note: Before the book above came out, this was the book I used to recommend for getting familiar with the math concepts used in machine learning. It's a very solid book in my view, and it's heavily referenced in academia.

📘 The Elements of Statistical Learning

by Jerome H. Friedman, Robert Tibshirani, and Trevor Hastie

Note: Machine learning deals with data and, in turn, with uncertainty, which is what statistics teaches. Get comfortable with topics like estimators, statistical significance,...

📘 Probability Theory: The Logic of Science

by E. T. Jaynes

Note: In machine learning, we are interested in building probabilistic models, so you will come across concepts from probability theory such as conditional probability and various probability distributions.

Amazing resources to start learning MLOps, one of the most exciting areas in machine learning engineering:

📘 Introducing MLOps

An excellent primer to MLOps and how to scale machine learning in the enterprise.

https://t.co/GCnbZZaQEI

🎓 Machine Learning Engineering for Production (MLOps) Specialization

A new specialization by https://t.co/mEjqoGrnTW on machine learning engineering for production (MLOps).

https://t.co/MAaiRlRRE7

⚙️ MLOps Tooling Landscape

A great blog post by Chip Huyen summarizing all the latest technologies/tools used in MLOps.

https://t.co/hsDH8DVloH

🎓 MLOps Course by Goku Mohandas

A series of lessons teaching how to apply machine learning to build production-grade products.

https://t.co/RrV3GNNsLW

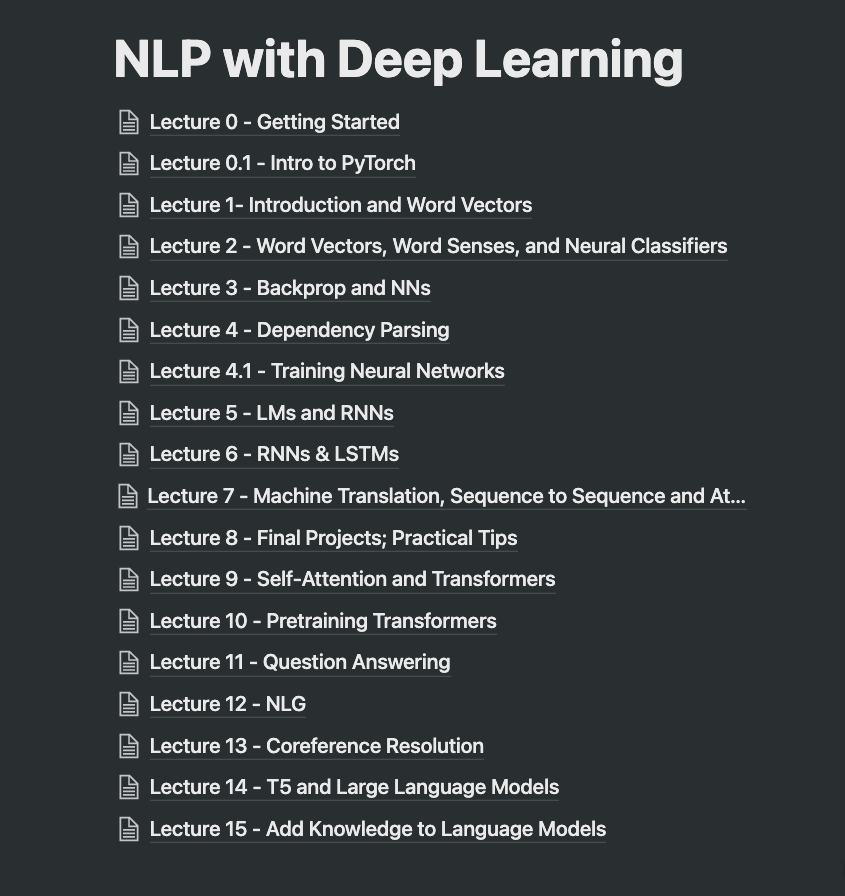

For the past month, I've been writing detailed notes for the first 15 lectures of Stanford's NLP with Deep Learning course. The notes contain code, equations, practical tips, references, etc.

As I tidy the notes, I need to figure out how best to publish them. Here are the topics covered so far:

I know many of you are interested in these, based on what I gathered 1 month ago. I want to make sure they are high quality before publishing, so I will spend some time working on that. Stay tuned!

Below is the course I've been auditing. My advice: take it slow, because the lectures cover some advanced concepts. It took me 1 month (~3 hrs a day) to take rough notes on the first 15 lectures. Note that this is one semester of…

I'm super excited about this project because my plan is to make the content more accessible so that beginners can consume it more easily. It's tiring, but I will keep at it because I know many of you will enjoy the notes and find them useful. More announcements coming soon!

NLP is evolving fast, so one idea for these notes is to create a living document that the community can easily maintain, similar to what we did before with NLP Overview: https://t.co/Y8Z1Svjn24

Let me know if you have any thoughts on this.

I've been writing notes for the latest Deep Learning for NLP course by Stanford.

— elvis (@omarsar0) January 14, 2022

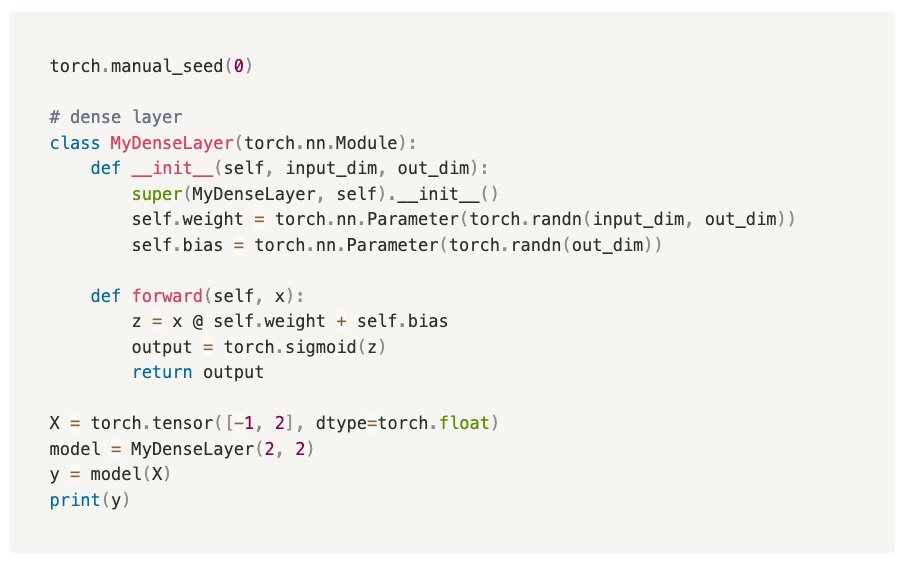

For fun, I also started adding my own code snippets to the notes. I think this is a more efficient way to study: theory + code.

I plan to share these notes soon. Stay tuned! pic.twitter.com/hWzZDORbl6