Prepare for constant battle.

Marketing for freelancers.

Everything I’ve learned.

A thread.

- Getting clients

- Pricing projects

- Negotiation

All the hard stuff, made easier.

- The clients you work with

- The projects you work on

- The pay you receive

All the important stuff, made better.

When demand outweighs supply, freelancing is freeing.

- Developer

- Designer

- Or writer

But the best doesn’t always get:

- The most work

- The best clients

- The highest pay

The perceived best often does.

This is when you’re at your most vulnerable.

Because you get complacent.

You might be busy now, but what about next week/month/year?

- Outsource

- Collaborate

- Pay forward

It’s a good problem to have, not a bad one.

Getting them to ask you is effective long-term.

- Make friends with people

- Demonstrate specific expertise

- Show proof of work

- Come recommended

- Do a good job

- Be discoverable (online and offline)

- Be authentic (don't fabricate)

- Be transparent (show results)

- Be accessible (help people)

- Be relatable (tell your story)

- Be brilliant (do great work)

- Be useful (spread value)

A good website with solid content opens up opportunities from all angles.

Makes you nothing to no one.

Pick a position and stick to it.

- What’s in demand

- What you’re best at

- What you enjoy most

- What makes you different

- What you can do for a long time

Look for the overlaps.

- Who are they?

- What do they buy?

- What are their problems?

- Where do they hang out?

And shiny object syndrome kills.

Stick to the plan and go long.

- Your website

- Your content

- Your email list

- Your social media accounts

Spend time curating them all.

Replace them with “this will”, “I can” and “I’m going to”.


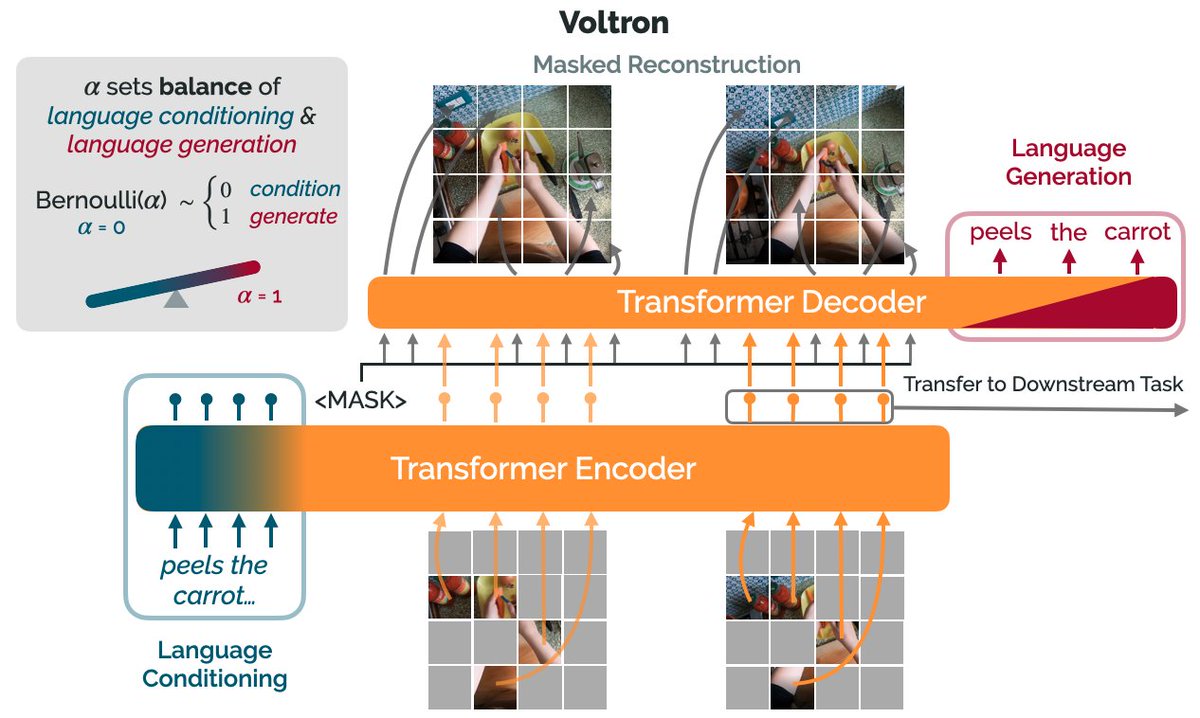

How can we use language supervision to learn better visual representations for robotics?

Introducing Voltron: Language-Driven Representation Learning for Robotics!

Paper: https://t.co/gIsRPtSjKz

Models: https://t.co/NOB3cpATYG

Evaluation: https://t.co/aOzQu95J8z

🧵👇 (1/12)

Videos of humans performing everyday tasks (Something-Something-v2, Ego4D) offer a rich and diverse resource for learning representations for robotic manipulation.

Yet the rich natural-language annotations accompanying each video remain an underused part of these datasets. (2/12)

The Voltron framework offers a simple way to use language supervision to shape representation learning, building on prior work in representations for robotics like MVP (https://t.co/Pb0mk9hb4i) and R3M (https://t.co/o2Fkc3fP0e).

The secret is *balance* (3/12)

Starting with a masked autoencoder over frames from these video clips, make a choice:

1) Condition on language and improve our ability to reconstruct the scene.

2) Generate language given the visual representation and improve our ability to describe what's happening. (4/12)
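To make the two options concrete, here is a minimal Python sketch of how a single training example might be set up. It only plans the step (which frame patches are hidden and which objective runs). No model is trained. The names `random_patch_mask`, `choose_objective` and `plan_step`, the mixing probability `alpha`, and the 0.75 mask ratio (the standard MAE default) are illustrative assumptions, not Voltron's actual code or hyperparameters.

```python
import random
from typing import Dict, List, Tuple


def random_patch_mask(
    num_patches: int, mask_ratio: float, rng: random.Random
) -> Tuple[List[int], List[int]]:
    """Split patch indices into (visible, masked), MAE-style."""
    if not 0.0 <= mask_ratio < 1.0:
        raise ValueError("mask_ratio must be in [0, 1)")
    num_masked = int(num_patches * mask_ratio)
    order = list(range(num_patches))
    rng.shuffle(order)  # uniform random permutation of patch indices
    masked = sorted(order[:num_masked])
    visible = sorted(order[num_masked:])
    return visible, masked


def choose_objective(alpha: float, rng: random.Random) -> str:
    """Hypothetical knob: generate language with probability alpha, else condition on it."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must be in [0, 1]")
    return "generate" if rng.random() < alpha else "condition"


def plan_step(
    num_patches: int, mask_ratio: float, alpha: float, rng: random.Random
) -> Dict[str, object]:
    """Describe one training example; no model or loss is computed here."""
    visible, masked = random_patch_mask(num_patches, mask_ratio, rng)
    objective = choose_objective(alpha, rng)
    if objective == "condition":
        # Option 1: encode visible patches + language, reconstruct the masked patches.
        target = "masked pixels"
    else:
        # Option 2: encode visible patches, decode the language annotation.
        # (Masking the frame in this branch too is an assumption of this sketch.)
        target = "language tokens"
    return {"objective": objective, "visible": visible, "masked": masked, "target": target}


if __name__ == "__main__":
    rng = random.Random(0)
    # 196 patches = a 14 x 14 grid (e.g. a 224 px frame cut into 16 px patches).
    step = plan_step(num_patches=196, mask_ratio=0.75, alpha=0.5, rng=rng)
    print(step["objective"], step["target"], len(step["visible"]), len(step["masked"]))
```

Sampling the objective per example is just one simple way to realize the choice. Setting `alpha` to 0 or 1 collapses the sketch to a single objective.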

By trading off *conditioning* and *generation*, we show that we can 1) learn better representations than prior methods and 2) explicitly shape the balance of low- and high-level features they capture.
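One way to write this trade-off down assumes a hypothetical mixing probability $\alpha$ (our notation, not necessarily the paper's). Suppose each example uses the language-generation objective with probability $\alpha$ and the language-conditioned reconstruction objective otherwise. The expected training loss is then a convex combination of the two, where $x$ is the video frame and $\ell$ its language annotation:

$$
\mathbb{E}[\mathcal{L}] = (1-\alpha)\,\mathcal{L}_{\text{cond}}\big(x_{\text{masked}} \mid x_{\text{visible}}, \ell\big) + \alpha\,\mathcal{L}_{\text{gen}}\big(\ell \mid x_{\text{visible}}\big), \qquad \alpha \in [0, 1]
$$

Under this reading, $\alpha = 0$ is pure language-conditioned reconstruction, $\alpha = 1$ is pure language generation, and values in between are the knob the thread describes for shaping the balance of low- and high-level features.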

Why is the ability to shape this balance important? (5/12)

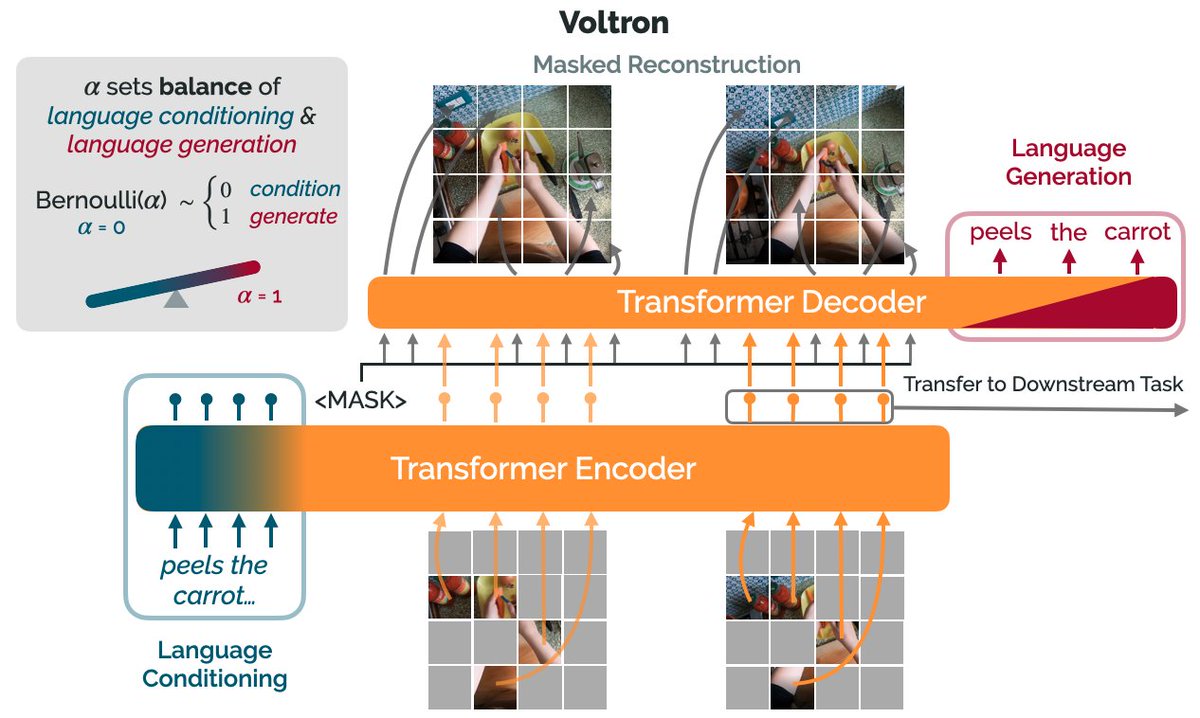
